Capgemini has just launched version 2 of the Capgemini Intelligent Automation Platform (CIAP) to assist organizations in offering an enterprise-wide and AI-enabled approach to their automation initiatives across IT and business operations. In particular, CIAP offers:

- Reduced TCO and increased resilience through use of shared third-party components

- Support for AIOps and DevSecOps

- A strong focus on problem elimination and functional health checks.

Reduced TCO & increased ability to scale through use of a common automation platform

A common problem with automation initiatives is their distributed nature across the enterprise, with multiple purchasing points and a diverse set of tools and governance, reducing overall RoI and the enterprise's ability to scale automation at speed.

Capgemini aims to address these issues through CIAP, a multi-tenanted cloud-based automation solution that can be used to deliver "automation on tap." It consists of an orchestration and governance platform and the UiPath intelligent automation platform. Each enterprise has a multi-tenanted orchestrator providing a framework for invoking APIs and client scripts together with dedicated bot libraries and a segregated instance of UiPath Studio. A central source of dashboards and analytics is built into the front-end command tower.

While UiPath is provided as an integral part of CIAP, CIAP also provides APIs to integrate other Intelligent Automation platforms with the CIAP orchestration platform, enabling enterprises to continue to optimize the value of their existing use cases.

The central orchestration feature within CIAP removes the need for a series of point solutions, allowing automations to be more end-to-end in scope and removing the need for integration by the client organization. For example, within CIAP, event monitoring can trigger ticket creation, which in turn can automatically trigger a remediation solution.

Another benefit of this shared component approach is a lower TCO through improved sharing of licenses. The client no longer has to duplicate tool purchasing and dedicate components to individual automations; the platform and its toolset can be shared across the infrastructure, applications, and business services departments within the enterprise.

CIAP is offered on a fixed-price subscription model priced on "typical" usage levels, with additional charges applicable only where client volumes necessitate additional third-party execution licenses or storage beyond those already incorporated in the package.

Support for AIOps & DevSecOps

CIAP began life focused on application services, and the platform provides support for AIOps and DevSecOps, not just business services.

In particular, CIAP incorporates AIOps using the client's application infrastructure logs for reactive and predictive resolutions. In terms of reactive resolutions, the AIOps can identify the dependent infrastructure components and applications, identify the root cause, and apply any automation available.

CIAP also ingests logs and alerts and uses algorithms to correlate them, so that the resolver group only needs to address a smaller number of independent scenarios rather than each alert individually. The platform can also incorporate the enterprise's known error databases so that if an automated resolution does not exist, the platform can still recommend the most appropriate knowledge objects for use in resolution.
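The correlation idea described above can be illustrated with a minimal sketch. It does not represent CIAP's actual algorithms, which are not described here; it simply assumes that alerts on the same component arriving within a few minutes of each other belong to one scenario. The alert fields (`component`, `time`) and the five-minute window are hypothetical.

```python
from datetime import datetime, timedelta

def correlate(alerts, window=timedelta(minutes=5)):
    """Group alerts on the same component that arrive within `window`
    of the previous alert in that component's current group."""
    groups = []
    open_group = {}  # component -> group currently collecting its alerts
    for alert in sorted(alerts, key=lambda a: a["time"]):
        group = open_group.get(alert["component"])
        if group is not None and alert["time"] - group[-1]["time"] <= window:
            group.append(alert)
        else:
            # Start a new scenario for this component
            group = [alert]
            groups.append(group)
            open_group[alert["component"]] = group
    return groups

# Hypothetical sample alerts
alerts = [
    {"component": "db-01", "time": datetime(2020, 1, 1, 10, 0)},
    {"component": "db-01", "time": datetime(2020, 1, 1, 10, 2)},
    {"component": "web-01", "time": datetime(2020, 1, 1, 10, 3)},
    {"component": "db-01", "time": datetime(2020, 1, 1, 10, 30)},
]
scenarios = correlate(alerts)
```

In this sample, four alerts collapse into three scenarios for the resolver group; a real implementation would presumably also take component dependencies into account.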

Future enhancements include increased emphasis on proactive capacity planning, including proactive simulation of the impact of change in an estate and enhancing the platform's ability to predict a greater range of possible incidents in advance. Capgemini is also enhancing the range of development enablers within the platform to establish CIAP as a DevSecOps platform, supporting the life cycle from design capture through unit and regression testing, all the way to release within the platform, initially starting with the Java and .NET stacks.

A strong focus on problem elimination & functional health checks

Capgemini perceives that repetitive task automation is now well understood by organizations, and the emphasis is increasingly on using AI-based solutions to analyze data patterns and then trigger appropriate actions.

Accordingly, to extend the scope of automation beyond RPA, CIAP provides built-in problem management capability, with the platform using machine learning to analyze historical tickets to identify the causes of recurring problems and, in many cases, initiate remediation automatically. CIAP then aims to reduce the level of manual remediation on an ongoing basis by recommending emerging automation opportunities.

In addition to bots addressing incident and problem management, the platform also has a major emphasis within its bot store on sector-specific bots providing functional health checks for sectors including energy & utilities, manufacturing, financial services, telecoms, life sciences, and retail & CPG. One example in retail is where prices are copied from a central system to store PoS systems daily. However, unreported errors during this process, such as network downtime, can result in some items remaining incorrectly priced in a store PoS system. In response to this issue, Capgemini has developed a bot that compares the pricing between upstream and downstream systems at the end of each batch pricing update, alerting business users, and triggering remediation where discrepancies are identified. Finally, the bot checks that remediation was successful and updates the incident management tool to close the ticket.

Similarly, Capgemini has developed a validation script for the utilities sector, which identifies possible discrepancies in meter readings leading to revenue leakage and customer dissatisfaction. For the manufacturing sector, Capgemini has developed a bot that identifies orders that have gone on credit hold, and bots to assist manufacturers in shop floor capacity planning by analyzing equipment maintenance logs and manufacturing cycle times.
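The retail price check described above can be sketched in a few lines. This is an illustrative sketch only, not Capgemini's bot: the SKU codes, prices, and function names are hypothetical, and the remediation step is simulated by re-copying the central price.

```python
from decimal import Decimal

def find_price_discrepancies(central, store):
    """Return {sku: (central_price, store_price)} for every SKU whose store
    price is missing or differs from the central (upstream) price."""
    discrepancies = {}
    for sku, central_price in central.items():
        store_price = store.get(sku)  # None if the item never reached the store
        if store_price != central_price:
            discrepancies[sku] = (central_price, store_price)
    return discrepancies

def reconcile(central, store):
    """Compare, remediate, then re-check that remediation succeeded."""
    found = find_price_discrepancies(central, store)
    for sku, (central_price, _) in found.items():
        store[sku] = central_price  # stand-in for the real remediation step
    remaining = find_price_discrepancies(central, store)
    return found, not remaining

# Hypothetical data: one wrong price and one item missing from the store PoS
central_prices = {"A100": Decimal("2.49"), "B200": Decimal("5.00"), "C300": Decimal("1.10")}
store_pos_prices = {"A100": Decimal("2.49"), "B200": Decimal("4.75")}

found, resolved = reconcile(central_prices, store_pos_prices)
```

Here `found` lists the two mismatched items and `resolved` indicates whether the follow-up check came back clean, which is the point at which a real bot would close the ticket.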

CIAP has ~200 bots currently built into the platform library.

A final advantage of platforms such as CIAP, beyond their libraries and cost advantages, is operational resilience: they provide orchestrated mechanisms for plugging in the latest technologies in a controlled and cost-effective manner while unplugging or phasing out previous generations of technology, all of which further enhances time to value. This is increasingly important to enterprises as their automation estates grow to take on widespread and strategic operational roles.


Q&A Part 2

JW: What are the main supply chain flows that supply chain executives should look to address?

JJ: Traditionally, there are three main supply chain flows that benefit from automation:

- Physical flow (the flow of goods, e.g., from a DC to a retailer; the most visible and tangible flow) – some instances are more obvious than others, such as parcels delivered to your door or raw materials arriving at a plant. To address these flows, the industry is getting ready (or is ready) to adopt drones, automated trucking, and automated guided vehicles (AGV). But to achieve true end-to-end physical delivery, major infrastructure and regulatory changes are yet to happen to fully unleash the potential of physical automation in this field. In the short term, however, let’s not forget the critical paper flow associated with these flows of goods, such as a courier sending Bills of Lading to a given port on time for customs clearance and vessel departure, a procedure that often leads to unexpected delays

- Financial flow (flow of money) – here the industry is adopting new technologies to alleviate common issues, e.g., interbank communication in support of letters of credit

- Information flow (flow of information connecting systems and stakeholders alike and ensuring that relevant data is shared, ideally in real-time, between, e.g., a supplier, a manufacturer, and its end customers) – this is the information you share via email/spreadsheets or through a platform connecting you with your ecosystem partners. This flow is also a perfect candidate for automation, starting with a platform to break silos or for smaller transformation with tactical RPA deployments. More ambitious firms will also want to look into blockchain solutions to, for instance, transparently access information about their suppliers and ensure that they are compliant (directly connecting to the blockchain containing information provided by the certification institution such as ISO). While the need for drones and automated trucking/shipping is largely contingent on infrastructure changes, regulations, and incremental discoveries, the financial and information flows have reached a degree of maturity at scale that has already been generating significant quantifiable benefits for years.

JW: Can you give me examples of where Capgemini has deployed elements of an autonomous supply chain?

JJ: Capgemini has developed capabilities to help our clients not only design but also run their services following best-practice methodologies blending optimal competencies, location mix, and processes powered by intelligent automation, analytics, and world-renowned platforms. We have helped clients transform their processes, and we have run them from our centers of excellence/delivery centers to maximize productivity.

Two examples spring to mind:

Touchless planning for an international FMCG company:

Our client had maxed out their forecasting capabilities using standard ERP embedded forecasting modules. Capgemini leveraged our Demand Planning framework powered by intelligent automation and combined it with best-in-class machine learning platforms to increase the client’s forecasting accuracy and lower planning costs by over 25%, and this company is now moving to a touchless planning function.

Automated order validation and delivery note for an international chemical manufacturing company:

Our client was running fulfillment operations internally at a high operating cost and low productivity. Capgemini transformed the client’s operations and created a lean team in a cost-effective nearshore location. On top of this, we leveraged intelligent automation to create a touchless purchase/sales order to delivery note creation flow, checking that all required information is correct, and either raising exceptions or passing on the data further down the process to trigger the delivery of required goods.

JW: What are the key success factors for enterprises starting the journey to autonomous supply chains?

JJ: Moving to an autonomous supply chain is a major business and digital transformation, not a standalone technology play, and so corporate culture is highly important in terms of the enterprise being prepared to embrace significant change and disruption and to operate in an agile and dynamic manner.

To ensure business value, you also need a consistent and holistic methodology such as Capgemini’s Digital Global Enterprise Model, which combines Six Sigma-based optimization approaches with a five senses-driven automation model, a framework for the deployment of intelligent automation and analytics technology.

Also, a lot depends on the quality of the supply chain data. Enterprises need to get the data right and master their supply chain data because you can’t drive autonomy if the data is not readily available, up-to-date in real-time, consistent, and complete. Supply chain and logistics is not so much about moving physical goods; it's been about moving information for decades. A bit of automation here and there will not make your supply chain touchless and autonomous. It requires integration and consolidation first before you can aim for autonomy.

JW: And how should enterprises start to undertake the journey to autonomous supply chains?

JJ: The first step is to build the right level of skill and expertise within the supply chain personnel. Scaling too fast without considering the human factor will result in a massive mess and a dip in supply chain performance. Also, it is important to set a culture of continuous improvement and constant innovation, for example, by leveraging a digitally augmented workforce.

Secondly, the right approach is to make elements of the supply chain touchless. Autonomy will happen as a staged approach, not as a big bang. It’s a journey. Focus on high-impact areas first, enable quick wins, and start with prototyping. So, supply chain executives should identify those pockets of excellence that are close to being ready, or can be made ready, to go touchless, and where you can drive supply chain autonomy.

One approach to identifying the most appropriate initiatives is to plot them against two axes: the y-axis being the effort to get there and the x-axis being the impact that can be achieved. This will help identify pockets of value that can be addressed relatively quickly, harvesting some quick wins first. As you progress down this journey, further technologies may mature that allow you to address the last pieces of the puzzle and get to an extensively autonomous supply chain.
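As a rough illustration of this two-axis plot, the sketch below classifies candidate initiatives by impact (x-axis) and effort (y-axis). The 0 to 10 scoring scale, the threshold of 5, the quadrant labels other than "quick win", and the sample initiatives are all assumptions made for the example.

```python
from dataclasses import dataclass

@dataclass
class Initiative:
    name: str
    impact: float  # x-axis: 0 (low) to 10 (high)
    effort: float  # y-axis: 0 (low) to 10 (high)

def quadrant(item, threshold=5.0):
    """Place an initiative in one of four quadrants of the effort/impact plot."""
    high_impact = item.impact >= threshold
    low_effort = item.effort < threshold
    if high_impact and low_effort:
        return "quick win"
    if high_impact:
        return "major project"
    if low_effort:
        return "fill-in"
    return "deprioritize"

def prioritize(items):
    """Order by highest impact first, breaking ties by lowest effort."""
    return sorted(items, key=lambda i: (-i.impact, i.effort))

# Hypothetical candidates and scores
initiatives = [
    Initiative("order validation", impact=8, effort=3),
    Initiative("automated trucking", impact=9, effort=9),
    Initiative("report formatting", impact=2, effort=2),
]
quick_wins = [i.name for i in initiatives if quadrant(i) == "quick win"]
ranked = [i.name for i in prioritize(initiatives)]
```

With these scores, only order validation lands in the quick-win quadrant, while automated trucking ranks highest on impact but sits in the high-effort quadrant.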

JW: Which technologies should supply chain executives be considering to underpin their autonomous supply chains in the future?

JJ: Beyond fundamental technologies such as RPA, machine learning has considerable potential to help, for example, in demand planning to increase accuracy, and in fulfillment to connect interaction and decision-making.

Technologies now exist that can both recognize and interpret the text in an email and automatically respond with all the information required. In order processing, for example, orders can be populated automatically, validated against inventory, and prioritized for delivery according to corporate rules, all without human intervention. This can potentially be extended further with automated carrier bookings against rules. Of course, this largely applies to the “happy flows” at the moment, but there are also proven practices to increase the proportion of “happy orders”.

The level of autonomy in supply chain fulfillment can also be increased by using analytics to monitor supply chain fulfillment and predict potential exceptions and problems, then either automating mitigation or proposing next-best actions to supply chain decision-makers.

This is only the beginning, as AI and blockchain still have a long way to go to reach their potential. Companies that harness their power now and are prepared to scale will be the ones coming out on top.

JW: Thank you, Joerg. I’m sure our readers will find considerable food for thought here as they plan and undertake their journeys to autonomous supply chains.


Introduction

Supply chain management is an area currently facing considerable pressure and is a key target for transformation. NelsonHall research shows that less than a third of supply chain executives in major enterprises are highly satisfied with, for example, their demand forecasting accuracy and their logistics planning and optimization, and that the majority perceive there to be considerable scope to reduce the levels of manual touchpoints and hand-offs within their supply chain processes as they look to move to more autonomous supply chains.

Accordingly, NelsonHall research shows that 86% of supply chain executives consider the transformation of their supply chains over the next two years to be highly important. This typically involves a redesign of the supply chain to maximize available data sources to deliver more efficient workflow and goods handling, improving connectivity within the supply chain to enable more real-time decision-making, and improving the competitive edge with better decision-making tools, analytics, and data sources supporting optimized storage and transport services.

Key supply chain transformation characteristics critical for driving supply chain autonomy that are sought by the majority of supply chain executives include supply chain standardization, end-to-end visibility of supply chain performance, ability to predict, sense, and adjust in real-time, and closed-loop adaptive planning across functions.

At the KPI level, there are particularly high expectations of higher demand forecasting accuracy and of improved logistics planning and optimization, leading to higher levels of fulfillment reliability, and of enhanced risk identification, leading to operational cost and working capital reduction.

So, overall, supply chain executives are typically seeking a reduction in supply chain costs, more effective supply chain processes and organization, and improved service levels.

Q&A Part 1

JW: Joerg, to what extent do you see existing supply chains under pressure?

JJ: From a manufacturer looking for increased supply chain resilience and lower costs to a B2C end consumer obsessed with speed, visibility, and aftersales services, supply chains are now under great pressure to transform and adapt themselves to remain competitive in an increasingly demanding and volatile environment.

Supply chain pressure results from increasing levels of supply chain complexity, higher customer expectations, a more volatile environment (e.g., trade wars, Brexit), difficulty in managing costs, and lack of visibility. In particular, global trade has been in a constant state of exception since 2009, creating a need to increase supply chain resilience via increased agility and flexibility and, in sectors such as fast-moving consumer goods and even automotive, hyper-personalization can mean a lot size of one, starting from procurement all the way through production and fulfillment. At the same time, supply chains are no longer simple “chains” but have talent, financial, and physical flows all intertwined in a DNA-like spiral resulting in a (supply chain) ecosystem with high complexity. All this is often compounded by the low level of transparency caused by manual processes. In response, enterprises need to start the journey to autonomous supply chains. However, many supply chains are still not digitized, so there’s a lot of homework to be done before introducing digitalization and autonomous supply chains.

JW: What do you understand by the term “autonomous supply chain”?

JJ: The end game in an “autonomous supply chain” is a supply chain that operates without human intervention. Just imagine a parcel reaching your home, knowing that it took no human intervention to fulfill your order. How much of this is fiction and how much reality?

Well, while some of this certainly depends on major investments and changes to regulations, in areas such as sending drones to deliver your parcels, flying over your neighborhood, or loading automated trucks crisscrossing the country with nobody behind the steering wheel, major steps in lowering costs and improving customer satisfaction can already be undertaken using current technologies. Recent surveys show that only a quarter of supply chain leaders perceive that they have reached a satisfactory automation level, leveraging the most innovative end-to-end solutions currently available.

JW: What benefits can companies expect from the implementation of an “autonomous supply chain”?

JJ: Our observations and experience link autonomous supply chains to:

- Lower costs – it is no surprise that supply chain automation already helps to lower costs (and will do even more so in the future), combining FTE savings and lower exception handling costs coupled with productivity and quality gains

- Improved customer satisfaction – as a customer you may ask, why should I care that the processes leading to the delivery of my products are “no touch”, that they required hardly any human intervention? Well, you will when your products are delivered faster and your experience from order to delivery is transparent and seamless, requiring no tedious phone calls to locate your product(s) or complaints about delivery or invoicing errors!

- Increased revenue – as companies process more, faster, with fewer handling and processing errors along the way, they create added value for their customers and benefit from capacity gains that eventually affect their top line, particularly when operational savings are passed on to lower delivery/product prices, thus allowing for a healthy combination of margin and revenue increase.

We have seen that automation can do far more than simply cut costs and that there are many ways to implement automation at scale without relying on infrastructure/regulation changes (e.g., drones) – for example, by leveraging a digitally augmented workforce. Companies have been launching proofs of concept (POCs) but often struggle to reap the true benefits due to talent shortages, siloed processes, and a lack of a long-term holistic vision.

JW: What hurdles do organizations need to overcome to achieve an autonomous supply chain?

JJ: We have observed that companies often face the following hurdles when trying to create a more autonomous supply chain:

- Lack of visibility and transparency – due to 1) outdated process flows, and 2) siloed information systems often requiring email-based information exchange (back and forth non-standardized spreadsheets, flat files)

- Lack of agility (influencing/impacting the overall resilience of the supply chain) – the inability to execute on insights due to slow information velocity and stiffness in their processes, often focused on functions as opposed to value-added processes cutting across the organization

- Lack of the right talent – difficulty in finding talent in a very competitive industry with new technologies making typical supply chain profiles less relevant and new digital profiles often costly to train and hard to retain

- Lack of centralization and consolidation – leading to high costs, poor productivity, and disjointed technology landscapes, often unable to scale across the organization due to a lack of a holistic transformation approach and proper governance.

One thing that many companies have in common is a lack of ability to deploy automation solutions at scale, cost-effectively. Too often, these projects remain at a POC stage and are parked until a new POC (often technology-driven) comes along and yet again fails to scale properly due to high costs, lack of resources, and lack of strategic vision tied to business outcomes.

In Part 2 of the interview, Joerg Junghanns discusses the supply chain flows that benefit from automation, describes client case examples, and highlights the success factors, adoption approach, and key technologies behind autonomous supply chains.


In 2016, Atos was awarded a 13-year life & pensions BPO contract by Aegon, taking over from the incumbent Serco and involving the transfer of ~300 people in a center in Lytham St Annes.

The services provided by Atos within this contract include managing end-to-end operations, from initial underwriting through to claims processing, for Aegon's individual protection offering, which comprises life assurance, critical illness, disability, and income protection products (and for which Aegon has 500k customers).

Alongside this deal, Aegon was separately evaluating the options for its closed book life & pensions activity and subsequently went to market to outsource its U.K. closed book business covering 1.4m customers across a range of group and individual policy types. The result was an additional 15-year deal with Atos, signed recently.

Three elements were important factors in the award of this new contract to Atos:

- Transfer of the existing Aegon personnel

- Ability to replatform the policies

- Implementation of customer-centric operational excellence.

Leveraging Edinburgh-Based Delivery to Offer Onshore L&P BPS Service

The transfer of the existing Aegon personnel and maintaining their presence in Edinburgh was of high importance to Aegon, the union, and the Scottish government. The circa 800 transferred personnel will continue to be housed at the existing site when transfer takes place in summer 2019, with Atos sub-leasing part of Aegon’s premises. This is possible for Atos since it is the company’s first life closed block contract and the company is looking to win additional deals in this space over the next few years (and will be going to market with an onshore rather than offshore-based proposition).

Partnering with Sapiens to Offer Platform-Based Service

While (unlike some other providers of L&P BPS services) Atos does not own its own life platform, the company does recognize that platform-based services are the future of closed book L&P BPS. Accordingly, the company has partnered with Sapiens, and the Sapiens insurance platform will be used as a common platform and integrated with Pega BPM across both Aegon’s protection and closed book policies.

Atos has undertaken to transfer all of the closed block policies from Aegon’s two existing insurance platforms to Sapiens, and these will be transferred over the 24-month period following service transfer. The new Sapiens-based system will be hosted and maintained by Atos.

Aiming for Customer-Centric Operational Excellence

The third consideration is a commitment by Atos to implement customer-centric operational excellence. While Aegon had already begun to measure customer NPS and assess ways of improving the service, Atos has now begun to extend the customer journey mapping techniques deployed in its Lytham center to identify customer effort and pain points. Use of the Sapiens platform will enable customer self-service and omni-channel service, while this and further automation will be used to facilitate the role of the agent and increase the number of policies handled per FTE.

The contract is priced using the fairly traditional pricing mechanisms of a transition and conversion charge (£130m over a 3-year period) followed by a price per policy, with Atos aiming for efficiency savings of up to £30m per annum across the policy book.

Atos perceives that this service will become the foundation for a growing closed block L&P BPS business, with Atos challenging the incumbents such as TCS Diligenta, Capita, and HCL. Edinburgh will become Atos’ center of excellence for closed book L&P BPS, with Atos looking to differentiate from existing service providers by offering an onshore-based alternative with the digital platform and infrastructure developed as part of the Aegon relationship, offered on a multi-client basis. Accordingly, Atos will be increasingly targeting life & pensions companies, both first-time outsourcers and those with contracts coming up for renewal, as it seeks to build its U.K. closed book L&P BPS business.


Wipro has a long history of building operations platforms in support of shared services and has been evolving its Base))) platform for 10 years. The platform started life as a business process management (i.e. workflow) platform and includes Base))) Core, a BPM platform in use within ~25 Wipro operations floors, and Base))) Prism, providing operational metrics.

These elements of Base))) are now complemented by Base))) Harmony, a SaaS-based process capture and documentation platform.

So why is this important? Essentially, Harmony is appropriate where major organizations are looking to stringently capture and document their processes across multiple SSCs to further harmonize or automate these processes. It is particularly suitable for use where:

- Organizations are looking to drive the journey to GBS and consolidate their SSCs into a smaller number of centers

- Multinationals are active acquirers and need to be able to standardize and integrate SSCs within acquired companies into their GBS operations

- BPS contracts are coming up for renewal

- Organizations are looking to shorten the time-consuming RPA assessment lifecycle.

Supporting Process Harmonization for SSC Consolidation & Acquisition

Harmony is most appropriate for multinational organizations with multiple SSCs looking to consolidate these further. It has been used in support of standardized process documentation, and library and version control, by major organizations in the manufacturing, telecoms and healthcare sectors.

In recent years, multinationals have typically been on a journey moving away from federated SSCs, each with their own highly customized processes, to a GBS model with more standardized processes. However, relatively few organizations have completed this journey and, typically, scope remains for further process standardization and consolidation. This situation is often exacerbated by a constant stream of acquisitions and the need to integrate the operations of acquired companies. Many multinationals are active acquirers and need to be able to standardize and integrate SSCs within acquired companies into their GBS operations as quickly and painlessly as possible.

Process documentation is a key element in this process standardization and consolidation. However, process documentation in the form of SOPs can often be a manual and time-consuming process suffering from a lack of governance and change & version control.

Harmony is a standalone SaaS platform for knowledge & process capture and harmonization that aims to address this issue. It supports process capture at the activity level, enabling process steps to be captured diagrammatically along with supporting detailed documentation, including attachments, video, and audio.

The documentation is highly codified, capturing the “why, what, who, & when” for each process in a structured form along with the possible actions for each process step, e.g. allocate, approve, or calculate, using the taxonomy developed in the MIT Process Handbook.

From a review perspective, Harmony also provides a view from the perspectives of data, roles, and systems for each process step, so that, for example, it is easy to identify which data, roles or systems are involved in each step. Similarly, the user can click on, say, a specific role to see which steps that role participates in. This assists in checking for process integrity, e.g. checking that a role entering data cannot also be an approver.
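The role view and integrity check described above can be sketched as follows. This is not Harmony's data model; the step records, role names, and the "enter" action are hypothetical, chosen only to show how a role that both enters and approves data could be flagged.

```python
from collections import defaultdict

# Hypothetical captured process steps with the roles involved in each
steps = [
    {"step": "Enter invoice", "action": "enter", "roles": {"AP Clerk"}},
    {"step": "Approve invoice", "action": "approve", "roles": {"AP Manager", "AP Clerk"}},
    {"step": "Post payment", "action": "calculate", "roles": {"Treasury Analyst"}},
]

def steps_by_role(steps):
    """Role view: which steps does each role participate in?"""
    index = defaultdict(list)
    for s in steps:
        for role in sorted(s["roles"]):
            index[role].append(s["step"])
    return dict(index)

def roles_for_action(steps, action):
    """Collect every role involved in steps with the given action."""
    roles = set()
    for s in steps:
        if s["action"] == action:
            roles |= s["roles"]
    return roles

def conflicting_roles(steps, first="enter", second="approve"):
    """Integrity check: roles that perform both of two incompatible actions."""
    return roles_for_action(steps, first) & roles_for_action(steps, second)

role_view = steps_by_role(steps)
conflicts = conflicting_roles(steps)
```

In this sample the AP Clerk appears in both the entry and approval steps, so the check flags that role for review.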

Wipro estimates that documentation of a complex process with ~300 pages of SOP takes 2-3 weeks, with documentation of a simple process such as receiving an invoice or onboarding an employee taking 2-3 days, and initial training in Harmony typically taking a couple of days.

Harmony also supports process change governance, notifying stakeholders when any process modifications are made.

Reports available include:

- SOPs, including process flows and screenshots, which can be used for training purposes

- SLAs

- Project plans

- Role summaries

- Gap analysis, covering aspects such as SLAs and scope for automation.

Support for process harmonization and adoption of reference or “golden processes” are also key aspects of Harmony functionality. For example, it enables the equivalent processes in various countries or regions to be compared with each other, or with a reference process, identifying the process differences between regions. The initial reference process can then be updated as part of this review, adding best practices from country or regional activities.
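A comparison of a regional process against a reference process can be illustrated with Python's standard `difflib` module. This is a sketch of the general idea only, not of how Harmony performs the comparison; the step names are hypothetical and each process is simplified to an ordered list of step names.

```python
from difflib import SequenceMatcher

def compare_to_reference(reference, regional):
    """Return the differences between a reference process and a regional
    variant as (change, reference_steps, regional_steps) tuples."""
    differences = []
    matcher = SequenceMatcher(a=reference, b=regional, autojunk=False)
    for tag, i1, i2, j1, j2 in matcher.get_opcodes():
        if tag != "equal":  # tag is 'insert', 'delete', or 'replace'
            differences.append((tag, reference[i1:i2], regional[j1:j2]))
    return differences

# Hypothetical reference ("golden") process and one regional variant
reference_process = ["Receive invoice", "Validate invoice", "Approve invoice", "Pay invoice"]
regional_process = ["Receive invoice", "Validate invoice", "Check tax code",
                    "Approve invoice", "Pay invoice"]

differences = compare_to_reference(reference_process, regional_process)
```

Here the only difference reported is the extra regional step, which a reviewer could then either remove from the region or adopt into the reference process as a best practice.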

Harmony also plays a role in best practice adoption, including within its process libraries a range of golden processes, principally in finance & accounting and human resources, which can be used to speed up process capture or to establish a reference process.

Facilitating Value Extraction from BPS Contract Renewals

Despite the lack of innovation experienced within many in-force F&A BPS contracts, the lack of robust process documentation across all centers can potentially be a major inhibitor to changing suppliers. Organizations often tend to stick with their incumbent since they are aware of the time and effort that was required for the incumbent to acquire process understanding, and they are wary of the length and difficulty of transferring process knowledge to a new supplier.

Harmony can potentially assist organizations facing this dilemma in running more competitive sourcing exercises and increasing the level of business value achieved on contract renewal by baselining process maturity, identifying automation potential, and by providing a mechanism for training new associates more assuredly.

Harmony provides a single version of each SOP online. As well as maintaining a single version of the truth, this assists organizations in training associates (with a new associate able to select just the appropriate section of a large SOP, relating to their specific activity, online).

Shortening the RPA Process Assessment Lifecycle

As organizations increasingly seek to automate processes, a key element in Harmony is its “botmap” module. Two of the challenges faced by organizations in adopting automation are the need for manual process knowledge capture and the discrepancies that often arise between out-of-date SOPs and associate practice. This typically leads to a 4-week process capture and documentation period at the front-end of the typically 12-week RPA assessment and implementation lifecycle.

Harmony can potentially assist in shortening, and reducing the cost of, these automation initiatives by eliminating much of the first 4 weeks of this 12-week RPA assessment and implementation lifecycle. It does this by recommending process steps with a high potential for automation. These recommendations are based on an algorithm that takes into account parameters such as the nature of the process step, the sequence of activities, the number of FTEs, the systems used, and the repeatability of the process. The resulting process recommendations assist the RPA business analyst in identifying the most appropriate areas for automation while also providing them with an up-to-date, more reliable, source of process documentation.
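To make the idea of a parameter-based recommendation concrete, here is a minimal scoring sketch. It is not Wipro's algorithm: the weights, the caps, the `ProcessStep` fields, and the assumption that fewer systems means easier automation are all illustrative, and the sequence of activities is left out for brevity.

```python
from dataclasses import dataclass


@dataclass
class ProcessStep:
    name: str
    rule_based: bool      # nature of the step (assumed to be a yes/no flag)
    fte_count: float      # FTEs performing the step
    systems_used: int     # number of systems touched by the step
    repeatability: float  # 0.0 (ad hoc) to 1.0 (identical each time)


# Illustrative weights only; they are not taken from Harmony.
WEIGHTS = {"rule_based": 0.35, "repeatability": 0.35, "fte": 0.2, "systems": 0.1}


def automation_score(step, max_fte=10.0, max_systems=5):
    """Return a score between 0 and 1; higher suggests a stronger candidate."""
    fte_factor = min(step.fte_count / max_fte, 1.0)
    # Assumption: steps touching fewer systems are easier to automate.
    systems_factor = 1.0 - min(step.systems_used / max_systems, 1.0)
    return (
        WEIGHTS["rule_based"] * (1.0 if step.rule_based else 0.0)
        + WEIGHTS["repeatability"] * step.repeatability
        + WEIGHTS["fte"] * fte_factor
        + WEIGHTS["systems"] * systems_factor
    )


def rank_steps(steps):
    """Order steps from strongest to weakest automation candidate."""
    return sorted(steps, key=automation_score, reverse=True)


steps = [
    ProcessStep("Resolve customer dispute", False, 2, 3, 0.2),
    ProcessStep("Key invoice data", True, 8, 1, 0.9),
]
for step in rank_steps(steps):
    print(step.name, round(automation_score(step), 3))
```

A weighted sum is only one possible design; it is used here because it keeps each parameter's contribution easy for a business analyst to inspect.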


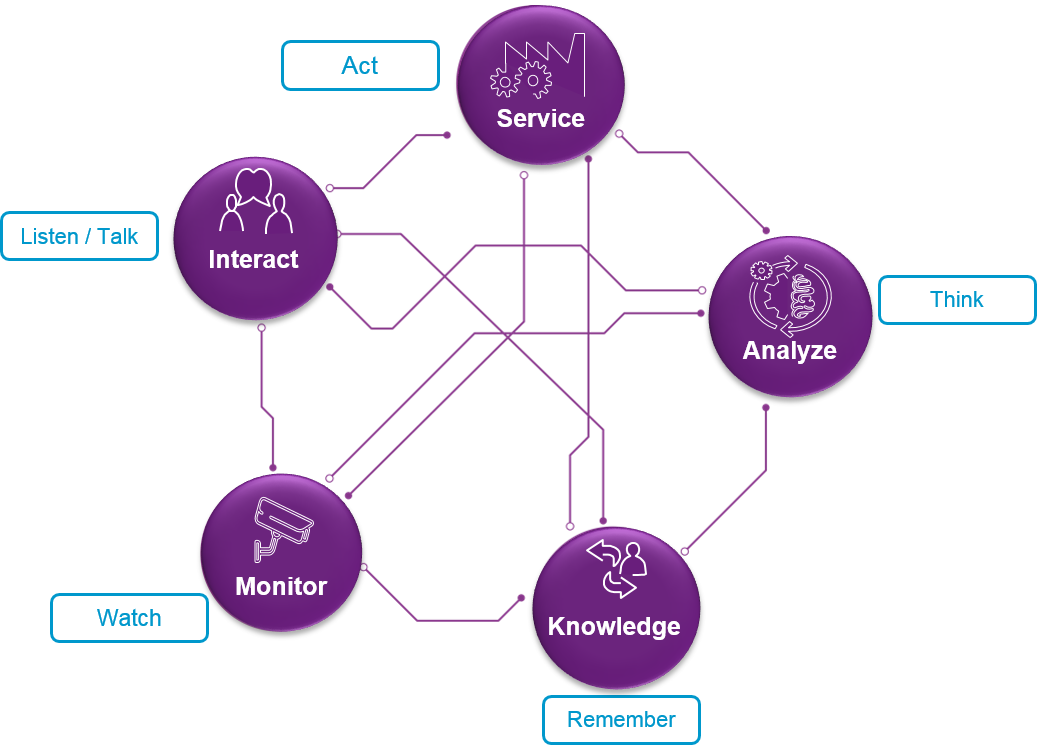

Capgemini's Carole Murphy

Capgemini has recently redefined its framework for Intelligent Automation, taking an approach based on ‘five senses’. I caught up with Carole Murphy, Capgemini’s Head of BPO Business Transformation Services, to identify how their new approach to Intelligent Automation is being applied to the finance & accounting function.

JW: What is Capgemini’s approach to Intelligent Automation and how does it apply to finance & accounting?

CM: Capgemini is using a ‘five senses’ model to help explain Intelligent Automation to our clients and to act as a design framework in developing new solutions. The ‘five senses’ are:

- Monitor (watch): in F&A, for example, monitoring of KPI dashboards, daily cash in monitoring, customer dispute monitoring, etc.

- Interact (listen/talk): interacting with users, customers, and vendors, using a range of channels including virtual agents

- Service (act): automated execution, for example using RPA to address cash reconciliation, automated creation of sales invoices, and exceptions handling

- Analyze (think): providing business analytics in support of P&L and cash flow and root cause analysis in support of process effectiveness and efficiency

- Manage knowledge (remember): a knowledge base covering SOPs, collection strategies, bank reconciliation rules etc.

Capgemini's 'Five Senses' IA model

JW: Why is this approach important?

CM: It changes the fundamental nature of finance operations from reactive to proactive. Historically, within BPS contracts, the vast majority of the process has been in ‘act’ mode, supplemented by a certain amount of analytics. The introduction of Intelligent Automation enables us to move to a much more rounded and proactive approach. For example, a finance organization can now identify what is missing before it starts to cause problems for the organization. In accounts payable, for example, Intelligent Automation enables an organization to anticipate utility bills, and proactively identify any missing or unsent invoices before, say, a key facility is switched off. Similarly, in support of R2R, transactions can be monitored throughout the month and the organization can anticipate their impact on P&L well in advance of the formal month-end close. In summary, Intelligent Automation enables ongoing monitoring and analysis rather than periodic analysis and can incorporate real-time alerts, such as to identify a missing invoice and find out why it wasn’t raised. So, a much more informed and proactive approach to operations.

JW: And what impact does IA have on the roles of the people within the finance function?

CM: Intelligent Automation lifts both the role of the finance function within the organization and those of the individuals within the finance function. One of the benefits of Intelligent Automation is that it improves how we share and deploy rules and knowledge throughout the organization – making compliance more accessible and enabling colleagues to understand how to make good financial decisions. Complex queries can still be escalated but simple questions can be captured and resolved by the ‘knowbot’. This can change how we train and continually develop our people and how we interact across the organization.

JW: So how should organizations approach implementing Intelligent Automation within their finance & accounting functions?

CM: One of the exciting elements of the new technology is that it is designed for users to be able to implement more quickly and easily – it’s all about being agile. Alongside implementing point solutions, the value will ultimately be how to combine the senses to bring the best out of people and technology.

We can re-think and re-imagine how we work. Traditionally, we have organized work in sequential process steps – using the 5 senses and intelligent automation we can reconfigure processes, technology and human intervention in a much more inter-connected manner with constant interaction taking place between the ‘five senses’ discussed earlier.

This means that it’s important to reimagine traditional finance & accounting processes and fundamentally change the level of ambition for the finance function. So, for example, the finance function can now start to have much more impact on the top line, such as by avoiding leakage due to missing orders and missing payments. Here, Intelligent Automation can monitor all transactions and identify any that appear to be missing. Similarly, it’s possible to implement ‘fraud bots’ to identify, for example, duplicate invoices or payments to give much greater levels of insight and control than available traditionally.
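As a simple illustration of the duplicate-invoice check mentioned above, the sketch below groups invoices that share a vendor, a normalized invoice number, and an amount. The field names and normalization rules are assumptions for the example, not a description of Capgemini's tooling.

```python
from collections import defaultdict


def find_duplicate_invoices(invoices):
    """Group invoices sharing vendor, normalized invoice number, and amount.

    `invoices` is a list of dicts with the hypothetical keys
    'id', 'vendor', 'number', and 'amount'. Returns lists of matching ids.
    """
    groups = defaultdict(list)
    for invoice in invoices:
        key = (
            invoice["vendor"].strip().lower(),
            invoice["number"].replace("-", "").replace(" ", "").upper(),
            round(invoice["amount"], 2),
        )
        groups[key].append(invoice["id"])
    return [ids for ids in groups.values() if len(ids) > 1]


# Hypothetical sample data.
INVOICES = [
    {"id": 1, "vendor": "Acme Ltd", "number": "INV-1001", "amount": 250.00},
    {"id": 2, "vendor": "Acme Ltd", "number": "INV-1002", "amount": 250.00},
    {"id": 3, "vendor": "acme ltd ", "number": "inv 1001", "amount": 250.0},
]

print(find_duplicate_invoices(INVOICES))
```

Exact matching after normalization catches re-keyed invoices; a production check would likely add fuzzy matching on vendor names and dates.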

JW: What does this involve?

CM: There are lots of ways to start engaging with the technology – we see a ‘virtuous cycle’ that comprises the following steps:

- Refresh: benchmarking of the finance function

- Reimagine: workshops involving design thinking, innovation lab, and Intelligent Automation framework to identify the ‘art-of-the-possible’

- Reengineer: incorporating eSOAR methodology and Digital GEM

- Roll-out: here it’s very important to take a very agile development approach, typically using preconfigured prototypes, templates, and tested platforms

- Run: including 24/7 monitoring.

Reimagining the F&A processes in the light of Intelligent Automation, rather than automating existing processes largely ‘as-is’, is especially important. In particular, it’s important to eliminate, rather than automate, any ‘unnecessary’ process steps. Here, Capgemini’s eSOAR approach is particularly important and covers:

- Eliminate: removing wasteful or unnecessary activities

- Standardize processes

- Optimize ERPs, workflow, and existing IT landscape

- Automate: using best-of-breed tools

- Robotics: robotizing repetitive and rule-based transactions.

Finally, it’s critical not to be scared of the new technology and possibilities. The return on investment is incredible and the initial returns can be used to fund downstream transformation. At the same time, the cost, and timescale, of failure is relatively low. So, it’s important for finance organizations to start applying Intelligent Automation to get first-mover advantage rather than just watch and wait.

JW: Thank you very much, Carole. That certainly ties in with current NelsonHall thinking and will really help our readership. NelsonHall is increasingly being asked by our clients what constitutes next generation services and what is the art-of-the-possible in terms of new digital process models. And finance and accounting is at the forefront of these developments. Certainly, design thinking is an important element in assisting organizations to rethink both accounting processes and how the finance function can make a greater contribution to the wider enterprise – and the ‘five senses’ approach helps to demystify Intelligent Automation by clarifying the roles of the various technologies such as RPA for process execution, analytics for root cause analysis, and knowledge bases for process knowledge.


As well as conducting extensive research into RPA and AI, NelsonHall is also chairing international conferences on the subject. In July, we chaired SSON’s second RPA in Shared Services Summit in Chicago, and we will also be chairing SSON’s third RPA in Shared Services Summit in Braselton, Georgia on 1st to 2nd December. In the build-up to the December event we thought we would share some of our insights into rolling out RPA. These topics were the subject of much discussion in Chicago earlier this year and are likely to be the subject of further in-depth discussion in Atlanta (Braselton).

This is the third and final blog in a series presenting key guidelines for organizations embarking on an RPA project, covering project preparation, implementation, support, and management. Here I take a look at the stages of deployment, from pilot development, through design & build, to production, maintenance, and support.

Piloting & deployment – it’s all about the business

When developing pilots, it’s important to recognize that the organization is addressing a business problem and not just applying a technology. Accordingly, organizations should consider how they can make a process better and achieve service delivery innovation, and not just service delivery automation, before they proceed. One framework that can be used in analyzing business processes is the ‘eliminate/simplify/standardize/automate’ approach.

While organizations will probably want to start with some simple and relatively modest RPA pilots to gain quick wins and acceptance of RPA within the organization (and we would recommend that they do so), it is important as the use of RPA matures to consider redesigning and standardizing processes to achieve maximum benefit. So begin with simple manual processes for quick wins, followed by more extensive mapping and reengineering of processes. Indeed, one approach often taken by organizations is to insert robotics and then use the metrics available from robotics to better understand how to reengineer processes downstream.

For early pilots, pick processes where the business unit is willing to take a ‘test & learn’ approach, and live with any need to refine the initial application of RPA. Some level of experimentation and calculated risk taking is OK – it helps the developers to improve their understanding of what can and cannot be achieved from the application of RPA. Also, quality increases over time, so in the medium term, organizations should increasingly consider batch automation rather than in-line automation, and think about tool suites and not just RPA.

Communication remains important throughout, and the organization should be extremely transparent about any pilots taking place. RPA does require a strong emphasis on, and appetite for, management of change. In terms of effectiveness of communication and clarifying the nature of RPA pilots and deployments, proof-of-concept videos generally work a lot better than the written or spoken word.

Bot testing is also important, and organizations have found that bot testing is different from waterfall UAT. Ideally, bots should be tested using a copy of the production environment.

Access to applications is potentially a major hurdle, with organizations needing to establish virtual employees as a new category of employee and give the appropriate virtual user ID access to all applications that require a user ID. The IT function must be extensively involved at this stage to agree access to applications and data. In particular, they may be concerned about the manner of storage of passwords. What’s more, IT personnel are likely to know about the vagaries of the IT landscape that are unknown to operations personnel!

Reporting, contingency & change management key to RPA production

At the production stage, it is important to implement an RPA reporting tool to:

- Monitor how the bots are performing

- Provide an executive dashboard with one version of the truth

- Ensure high license utilization.

There is also a need for contingency planning to cover situations where something goes wrong and work is not allocated to bots. Contingency plans may include co-locating a bot support person or team with operations personnel.

The organization also needs to decide which part of the organization will be responsible for bot scheduling. This can either be overseen by the IT department or, more likely, the operations team can take responsibility for scheduling both personnel and bots. Overall bot monitoring, on the other hand, will probably be carried out centrally.

It remains common practice, though not universal, for RPA software vendors to charge on the basis of the number of bot licenses. Accordingly, since an individual bot license can be used in support of any of the processes automated by the organization, organizations may wish to centralize an element of their bot scheduling to optimize bot license utilization.
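The arithmetic behind pooled licenses can be sketched as follows. The workload figures and function names are hypothetical, and the model assumes that any process can run at any time of day, which real scheduling windows may not allow.

```python
import math


def licenses_needed(busy_hours, hours_per_license=24.0, pooled=True):
    """Licenses required per day for the given bot workload.

    `busy_hours` maps a process name to the bot hours it needs per day.
    pooled=True shares licenses across processes; pooled=False dedicates
    licenses to each process.
    """
    if pooled:
        return math.ceil(sum(busy_hours.values()) / hours_per_license)
    return sum(math.ceil(hours / hours_per_license) for hours in busy_hours.values())


def utilization(busy_hours, licenses, hours_per_license=24.0):
    """Share of licensed bot hours actually used (0.0 to 1.0)."""
    return sum(busy_hours.values()) / (licenses * hours_per_license)


# Hypothetical daily workload in bot hours.
workload = {"accounts payable": 6.0, "accounts receivable": 5.0, "record to report": 4.0}

dedicated = licenses_needed(workload, pooled=False)
shared = licenses_needed(workload, pooled=True)
print("dedicated:", dedicated, utilization(workload, dedicated))
print("pooled:", shared, utilization(workload, shared))
```

With these sample figures, dedicating a license to each process needs three licenses, while centralized scheduling covers the same work with one.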

At the production stage, liaison with application owners is very important to proactively identify changes in functionality that may impact bot operation, so that these can be addressed in advance. Maintenance is often centralized as part of the automation CoE.

Find out more at the SSON RPA in Shared Services Summit, 1st to 2nd December

NelsonHall will be chairing the third SSON RPA in Shared Services Summit in Braselton, Georgia on 1st to 2nd December, and will share further insights into RPA, including hand-outs of our RPA Operating Model Guidelines. You can register for the summit here.

Also, if you would like to find out more about NelsonHall’s extensive program of RPA & AI research, and get involved, please contact Guy Saunders.

Plus, buy-side organizations can get involved with NelsonHall’s Buyer Intelligence Group (BIG), a buy-side only community which runs regular webinars on RPA, with your buy-side peers sharing their RPA experiences. To find out more, contact Matthaus Davies.

This is the final blog in a three-part series. See also:

Part 1: How to Lay the Foundations for a Successful RPA Project


As well as conducting extensive research into RPA and AI, NelsonHall is also chairing international conferences on the subject. In July, we chaired SSON’s second RPA in Shared Services Summit in Chicago, and we will also be chairing SSON’s third RPA in Shared Services Summit in Braselton, Georgia on 1st to 2nd December. In the build-up to the December event we thought we would share some of our insights into rolling out RPA. These topics were the subject of much discussion in Chicago earlier this year and are likely to be the subject of further in-depth discussion in Atlanta (Braselton).

This is the second in a series of blogs presenting key guidelines for organizations embarking on an RPA project, covering project preparation, implementation, support, and management. Here I take a look at how to assess and prioritize RPA opportunities prior to project deployment.

Prioritize opportunities for quick wins

An enterprise level governance committee should be involved in the assessment and prioritization of RPA opportunities, and this committee needs to establish a formal framework for project/opportunity selection. For example, a simple but effective framework is to evaluate opportunities based on their:

- Potential business impact, including RoI and FTE savings

- Level of difficulty (preferably low)

- Sponsorship level (preferably high).

The business units should be involved in the generation of ideas for the application of RPA, and these ideas can be compiled in a collaboration system such as SharePoint prior to their review by global process owners and subsequent evaluation by the assessment committee. The aim is to select projects that have a high business impact and high sponsorship level but are relatively easy to implement. As is usual when undertaking new initiatives or using new technologies, aim to get some quick wins and start at the easy end of the project spectrum.
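One way to apply the three criteria above is a simple additive score. The sketch below is an assumption-laden illustration: the 1 to 5 rating scale, the equal weighting, and the sample ideas are invented for the example and would need to be agreed by the governance committee.

```python
def priority_score(impact, difficulty, sponsorship):
    """Score an opportunity rated 1-5 on each criterion; higher is better.

    Difficulty is inverted so that easier projects score higher.
    """
    for value in (impact, difficulty, sponsorship):
        if not 1 <= value <= 5:
            raise ValueError("ratings must be between 1 and 5")
    return impact + sponsorship + (6 - difficulty)


def prioritize(opportunities):
    """Sort opportunity dicts from highest to lowest priority score."""
    return sorted(
        opportunities,
        key=lambda o: priority_score(o["impact"], o["difficulty"], o["sponsorship"]),
        reverse=True,
    )


# Hypothetical ideas submitted by business units.
IDEAS = [
    {"name": "Contract review", "impact": 5, "difficulty": 5, "sponsorship": 2},
    {"name": "Invoice data entry", "impact": 4, "difficulty": 2, "sponsorship": 5},
    {"name": "Report distribution", "impact": 2, "difficulty": 1, "sponsorship": 3},
]

for idea in prioritize(IDEAS):
    print(idea["name"])
```

In this sample, the high-impact but difficult and weakly sponsored idea ranks last, which matches the advice to start at the easy end of the project spectrum.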

However, organizations also recognize that even those ideas and suggestions that have been rejected for RPA are useful in identifying process pain points, and one suggestion is to pass these ideas to the wider business improvement or reengineering group to investigate alternative approaches to process improvement.

Target stable processes

Other considerations that need to be taken into account include the level of stability of processes and their underlying applications. Clearly, basic RPA does not readily adapt to significant process change, and so, to avoid excessive levels of maintenance, organizations should only choose relatively stable processes based on a stable application infrastructure. Processes that are subject to high levels of change are not appropriate candidates for the application of RPA.

Equally, it is important that the RPA implementers have permission to access the required applications from the application owners, who can initially have major concerns about security, and that the RPA implementers understand any peculiarities of the applications and know about any upgrades or modifications planned.

The importance of IT involvement

It is important that the IT organization is involved, as their knowledge of the application operating infrastructure and any forthcoming changes to applications and infrastructure need to be taken into account at this stage. In particular, it is important to involve identity and access management teams in assessments.

Also, the IT department may well take the lead in establishing RPA security and infrastructure operations. Other key decisions that require strong involvement of the IT organization include:

- Identity security

- Ownership of bots

- Ticketing & support

- Selection of RPA reporting tool.

Find out more at the SSON RPA in Shared Services Summit, 1st to 2nd December

NelsonHall will be chairing the third SSON RPA in Shared Services Summit in Braselton, Georgia on 1st to 2nd December, and will share further insights into RPA, including hand-outs of our RPA Operating Model Guidelines. You can register for the summit here.

Also, if you would like to find out more about NelsonHall’s extensive program of RPA & AI research, and get involved, please contact Guy Saunders.

Plus, buy-side organizations can get involved with NelsonHall’s Buyer Intelligence Group (BIG), a buy-side only community which runs regular webinars on sourcing topics, including the impact of RPA. The next RPA webinar will be held later this month: to find out more, contact Guy Saunders.

In the third blog in the series, I will look at deploying an RPA project, from developing pilots, through design & build, to production, maintenance, and support.


As well as conducting extensive research into RPA and AI, NelsonHall is also chairing international conferences on the subject. In July, we chaired SSON’s second RPA in Shared Services Summit in Chicago, and we will also be chairing SSON’s third RPA in Shared Services Summit in Braselton, Georgia on 1st to 2nd December. In the build-up to the December event we thought we would share some of our insights into rolling out RPA. These topics were the subject of much discussion in Chicago earlier this year and are likely to be the subject of further in-depth discussion in Atlanta (Braselton).

This is the first in a series of blogs presenting key guidelines for organizations embarking on RPA, covering establishing the RPA framework, RPA implementation, support, and management. First up, I take a look at how to prepare for an RPA initiative, including establishing the plans and frameworks needed to lay the foundations for a successful project.

Getting started – communication is key

Essential action items for organizations prior to embarking on their first RPA project are:

- Preparing a communication plan

- Establishing a governance framework

- Establishing an RPA center-of-excellence

- Establishing a framework for allocation of IDs to bots.

Communication is key to ensuring that use of RPA is accepted by both executives and staff alike, with stakeholder management critical. At the enterprise level, the RPA/automation steering committee may involve:

- COOs of the businesses

- Enterprise CIO.

Start with awareness training to get support from departments and C-level executives. Senior leader support is key to adoption. Videos demonstrating RPA are potentially much more effective than written papers at this stage. Important considerations to address with executives include:

- How much control am I going to lose?

- How will use of RPA impact my staff?

- How/how much will my department be charged?

When communicating to staff, remember to:

- Differentiate between value-added and non value-added activity

- Communicate the intention to use RPA as a development opportunity for personnel. Stress that RPA will be used to facilitate growth, to do more with the same number of people, and give people developmental opportunities

- Use the same group of people to prepare all communications, to ensure consistency of messaging.

Establish a central governance process

It is important to establish a strong central governance process to ensure standardization across the enterprise, and to ensure that the enterprise is prioritizing the right opportunities. It is also important that IT is informed of, and represented within, the governance process.

An example of a robotics and automation governance framework established by one organization was to form:

- An enterprise robotics council, responsible for the scope and direction of the program, together with setting targets for efficiency and outcomes

- A business unit governance council, responsible for prioritizing RPA projects across departments and business units

- An RPA technical council, responsible for RPA design standards, best practice guidelines, and principles.

Avoid RPA silos – create a center of excellence

RPA is a key strategic enabler, so use of RPA needs to be embedded in the organization rather than siloed. Accordingly, the organization should consider establishing an RPA center of excellence, encompassing:

- A centralized RPA & tool technology evaluation group. It is important not to assume that a single RPA tool will be suitable for all purposes and also to recognize that ultimately a wider toolset will be required, encompassing not only RPA technology but also technologies in areas such as OCR, NLP, machine learning, etc.

- A best practice for establishing standards such as naming standards to be applied in RPA across processes and business units

- An automation lead for each tower, to manage the RPA project pipeline and priorities for that tower

- IT liaison personnel.

Establish a bot ID framework

While establishing a framework for allocation of IDs to bots may seem trivial, it has proven not to be so for many organizations where, for example, including ‘virtual workers’ in the HR system has proved insurmountable. In some instances, organizations have resorted to basing bot IDs on the IDs of the bot developer as a short-term fix, but this approach is far from ideal in the long-term.

Organizations should also make centralized decisions about bot license procurement, and here the IT department which has experience in software selection and purchasing should be involved. In particular, the IT department may be able to play a substantial role in RPA software procurement/negotiation.

Find out more at the SSON RPA in Shared Services Summit, 1st to 2nd December

NelsonHall will be chairing the third SSON RPA in Shared Services Summit in Braselton, Georgia on 1st to 2nd December, and will share further insights into RPA, including hand-outs of our RPA Operating Model Guidelines. You can register for the summit here.

Also, if you would like to find out more about NelsonHall’s extensive program of RPA & AI research, and get involved, please contact Guy Saunders.

Plus, buy-side organizations can get involved with NelsonHall’s Buyer Intelligence Group (BIG), a buy-side only community which runs regular webinars on sourcing topics, including the impact of RPA. The next RPA webinar will be held in November: to find out more, contact Matthaus Davies.

In the second blog in this series, I will look at RPA need assessment and opportunity identification prior to project deployment.


Within its Lean Digital approach, Genpact is using digital and design thinking (DT) to assist organizations in identifying and addressing what is possible rather than just aiming to match current best-in-class, a concept now made passé by new market entrants.

At a recent event hosted at Genpact’s new center in Palo Alto, one client speaker described Genpact’s approach to DT. The company, a global consumer goods giant, had set up a separate unit within its large and mature GBS organization with a remit to identify major disruptions - with a big emphasis on “major”. It set a target of 10x improvement (rather than, say, 30%) to ensure thinking differently about activities, in order to achieve major changes in approach, not simply incremental improvements within existing process frameworks. The company already had mature best-of-breed processes and was being told by shared service consultants that the GBS organization merely needed to continue to apply more technology to existing order management processes. However, the company perceived a need to “do over” its processes to target fundamental and 10x improvements rather than continue to enhance the status quo.

The establishment of a separate entity within the GBS organization to target this level of improvement was important in order to put personnel into a psychological safety zone separated from the influence of existing operations experts, existing process perceived wisdom, and a tendency to be satisfied with incremental change. The unit then mapped out 160 processes and screened them for disruption potential, using two criteria to identify potential candidates:

- Are relevant disruptive technologies available and sufficiently mature now? Technologies ruled out at this stage included IoT and virtual customer service agents (the latter because they felt a 1% error rate was unacceptable in a commercial process)

- Does the company have the will to disrupt the process?

The exercise identified five initial areas for disruption with one of these being order management.

On order management, the company then sought external input from an organization that could contribute both subject matter expertise and DT capability. And Genpact, not an existing supplier to the GBS organization for order management, provided a team of 5-10 dedicated personnel supported by a supplementary team of ~30 personnel.

The team undertook an initial workshop of 2-3 days followed by a 6-8 week design thinking and envisioning journey. The key principles here were “to fall in love with the problem, not the solution”, with the client perceiving many DT consultancies as being too ready to lock-in to a (preferred) solution too early in the DT exercise, and to use creative inputs, not experts. In this case, personnel with experience in STP in capital markets were introduced in support of generating new thinking, and it was five weeks into the DT exercise before the client’s team was introduced to possible technologies.

This DT exercise identified two fundamental principles for changing the nature of order management:

- “No orders/no borders”, questioning the idea of whether an order was really necessary, instead viewing order management as a data problem, one that involves identifying the timing of replenishment based on various signals including those from retailers

- The concept of the order management agent as an ‘orchestrator’ rather than a ‘doer’, with algorithms being used for basic ‘information directing’ rather than agents.

This company identified the key criteria for selecting a design thinking partner to be a service provider that:

- Has the courage (and insight) to disrupt themselves and destroy their own revenue

- Will spend a long time on the problem and not force a favored solution.

Genpact claims to be ready to cannibalize its own revenue (as do, indeed, all BPS providers we have spoken to – the expected quid pro quo being that the client outsources other activities to them). However, in this example, the order management “agents” being disrupted consist of 200-300 in-house client FTEs and 400-500 FTEs from other BPS service providers, so there is no immediate threat to Genpact revenues.

The Real Impact of RPA/AI is Still Some Way Off

Clearly the application of digital, RPA and AI technologies is going to have a significant impact on the nature of BPS vendor revenues in future, and, of course, on commercial models. However, at present, the level of revenue disruption facing BPS vendors is being limited by:

- Organizations typically seeking 6%-7% cost reduction per annum, rather than higher, truly disruptive, targets

- Genpact’s own estimate that ~80% of clients currently reject disruptive value propositions.

Nonetheless, organizations are showing considerable interest in concepts such as Lean Digital. Genpact CEO ‘Tiger’ Tyagarajan says he has been involved in 79 CEO meetings (to discuss digital process transformation/disruptive propositions as a result of the company’s lean digital positioning) in 2016 compared to fewer than 10 CEO meetings in the previous 11 years.

Order Management an Activity Where Major Disruption Will Occur

Finally, this example (one of several that we have seen) illustrates that order management, which tends to have significant manual processing and to be client or industry-specific, is becoming a major target for the disruptive application of new digital technologies.

**********************

See also Genpact Combining Design Thinking & Digital Technologies to Generate Digital Asset Utilities by Rachael Stormonth, published this week here.

Sector domain focus

While Infosys is taking a horizontal approach to taking emerging technologies to the next level, WNS regards sector domain expertise as its key differentiator. Accordingly, while Infosys has moved delivery into a separate horizontal delivery organization, WNS continues to organize by vertical across both sales and delivery and looks to offer its employees careers as industry domain experts – it views its personnel as being sector experts and not just experts in a particular horizontal.

The differing philosophies of the two firms are also reflected in their approach to technology. WNS brought in a CTO nine months ago and now has a technology services organization. But where Infosys is building tools that are applicable cross-domain, WNS is building platforms and BPaaS solutions that address specific pain points within targeted sectors.

Location strategy

And while both firms are seeking (like all service providers) to achieve non-linear revenue growth, they have markedly different location strategies. Infosys is placing its bets on technology and largely leaving its delivery footprint unchanged, whereas WNS is increasingly taking its delivery network to tier 2 cities, not just in India but also within North America, Eastern Europe and South Africa, to continue to combine the cost benefits of labor arbitrage with those of technology. This dual approach should assist WNS in providing greater price-competitiveness and protection for its margins in the face of industry-wide pressure from clients for enhanced productivity improvement. Despite the industry-wide focus and investment on automation, the levels of roll-out of automation across the industry have typically been insufficient to outstrip pricing declines to generate non-linear revenue growth, and will remain so in the short-term, so location strategy still has a role to play.

At the same time, WNS is fighting hard for the best talent within India. For example, the company:

- Is taking part in a marketing campaign in India to persuade leading graduates (and their families), who may have increasingly been thinking that the BPS industry was not for them, that they can build an exciting career in BPS as domain experts

- Has announced a 2-year “MBA in Business Analytics” program in India developed in conjunction with NIIT. The program is aimed at mathematically strong graduates with 3-4 years of experience, who spend their first year in training, and their second year working on assignments with WNS. The program is delivering 120 personnel to WNS.

Industry domain credentials & technology strategy

Like a number of its competitors, WNS is increasingly focused on assisting organizations in adopting advanced digital business models that will offer them protection “not just from existing competitors but from competitors that don’t yet exist”. In particular, WNS is strengthening its positioning both in verticals where it is well established, such as insurance and the travel sector, and also in newer target sectors such as utilities, shipping & logistics, and healthcare, aiming to differentiate both with domain-specific technology and with domain-specific people. The domain focus of its personnel is underpinned not just by its organizational structure but also, for example, by the adoption of domain universities.

Accordingly, WNS is investing in digital frameworks, AI models, and “assisting clients in achieving the art of the possible” but within a strongly domain-centric framework. WNS’ overall technology strategy is strongly focused on domain IP, and combining this domain IP with analytics and RPA. WNS sees analytics as key; it has won a number of recent engagements leading with analytics, and is embedding analytics into its horizontal and vertical solutions as well as offering analytics services on a standalone basis. It currently has ~2,500 FTEs deployed on research and analytics, of whom ~1,600 are engaged on “pure” analytics.

But the overriding theme for WNS within its target domains is a strong focus on domain-specific platforms and BPaaS offerings, specifically platforms that digitalize and alleviate the pain points left behind by the traditional industry solutions, and this approach is being particularly strongly applied by WNS in the travel and insurance sectors.

In the travel sector, WNS offers platforms, often combined with RPA and analytics, in support of:

- Revenue recovery, through its Verifare fare audit platform

- Disruption management, through its RePax platform

- Proration, through its SmartPro platform.

It also offers an RPA-based solution in support of fulfilment, and Qbay in support of workflow management.

In addition, WNS is making bigger bets in the travel sector, investing in larger platform suites in the form of its commercial planning suite, including analytics in support of sales, code shares, revenue management, and loyalty. The emphasis is on reducing revenue leakage for travel companies, and on assisting them in balancing enhanced customer experience with their own profitability.

The degree of impact sought from these platforms is shown by the fact that WNS views its travel sector platforms as having ~$30m revenue potential within three years, though the bulk of this is still expected to come from the established Verifare revenue recovery platform.

WNS’ platforms and BPaaS offerings for the insurance sector include:

- Claims eAdjudicator, an RPA-based solution based on Fusion for classifying incoming insurance claims into categories such as ‘no touch’, ‘light touch’, ‘high touch requiring attention of senior personnel’, and ‘potentially fraudulent’ by combining information from multiple sources. WNS estimates that this tool can deliver a 70% reduction in support FTEs

- Broker Connect, a mobile app supporting broker self-service.

In addition, WNS offers two approaches to closed block policy servicing:

- InsurAce, a desktop aggregation tool supporting unified processing across the range of legacy platforms

- A BPaaS service based on the LIDP Titanium platform, which supports a wide range of life and annuity product types. This offering is also targeted at companies seeking to introduce new products, channels, or territories, and at spin-offs and start-ups.

The BPaaS service is underpinned by WNS’ ability to act as a TPA across all states in the U.S.

WNS’ vertical focus is not limited to traditional industry-specific processes. The company has also developed 10 industry-specific F&A services, with 50%+ industry-specific scope in F&A, with the domain-specific flavor principally concentrated within O2C.

In summary

Both Infosys and WNS are enhancing their technology and people capabilities with the aim of assisting organizations in implementing next generation digital business models. However, while Infosys is taking the horizontal route of developing new tools with cross-domain applicability and encouraging staff development via design thinking, WNS’ approach is strongly vertical-centric, developing domain-specific platforms and personnel with strong vertical knowledge and loyalties. So, two different approaches and differing trajectories, but with the same goal and no single winning route.

Well, the use of workflow and platforms to surround and supplement the client’s core systems has been well-established for a period of years. BPO has worked relatively well in these environments. The vendors largely have process models and roadmaps in place for the principal process areas. However, continuous improvement has been somewhat spasmodic in the past, since analytics and lean six sigma projects have tended to be carried out as one-off exercises rather than ongoing programs of activity, and the investment hurdles for further automation in support of process improvement have often been too high to make the process improvements identified readily realizable. This is now starting to change.

Firstly, RPA now provides a mechanism, at present largely restricted to rule-based processes, whereby process improvements identified through lean exercises can be realized at very low levels of investment and can accordingly address areas involving small numbers of FTEs and not just major process areas. This, however, is largely a short-term one-off hit, with most vendors likely to have applied RPA reasonably fully to their major contracts at least by the end of 2016.

The next form of automation within BPO, at a higher level of investment, is the use of BPaaS to address sub-processes where fundamental change is required. These BPaaS implementations will incorporate best-practice processes, and increasingly incorporate analytics and elements of self-learning to ensure that process adjustments are made on a more ongoing basis than in earlier forms of BPO.

The present form of RPA is largely a one-off cost reduction measure in the same way that offshoring is a one-off cost reduction measure. The natural progression from this automation of rule-based transactional processes is increased automation of judgment-based processes. This automation of judgment-based processes is still in its infancy and will frequently be used initially to support agent judgments, recommending next courses of action to be taken. This use of AI will move the focus of automation much more into sales and service in the front-office. However, while some of these technologies are beginning to handle natural language processing in text form, further improvements in voice recognition are still required.

In the middle-office, the major transformation in BPO will come from the IoT which has the potential to fundamentally change the nature of service delivery and the value driven by BPO. Arguably, Uber is a form of IoT-based service based on GPS technology. IoT will, however, drive equally fundamental changes in service delivery in areas such as insurance (where it has already started to appear in support of auto insurance), healthcare and telemedicine, and home monitoring services.

RPA is essentially the execution, by a bot mimicking human action, of repeatable, rule-based tasks that require little or no cognition, human expertise, or human intervention (though RPA can also be used to support agents within relatively hybrid tasks). The bot operates enterprise software and applications through existing user interfaces based on pre-defined rules and inputs and is best suited to relatively heavy-duty transaction processing.

The next stage is to complement RPA with newer technologies such as AI where judgment-based tasks are starting to be supported with cognitive platforms. Examples of cognitive technologies include adaptive learning, speech recognition, natural language processing, and pattern identification algorithms. While RPA has typically been in full swing for about two years and is currently reaching its peak of roll-out, cognitive technologies typically won’t reach wide-scale adoption for another few years. When they do, they promise to have most impact not in data-centric transactional processing activities but around unstructured sales and customer service content and processes.

O.K., so what about BPaaS?

Typically, BPaaS consists of a platform hosted by the vendor, ideally on a one-to-many basis, similarly to SaaS, complemented by operations personnel. These BPaaS platforms have been around for some time in areas such as finance & accounting in the form of systems of engagement surrounding core systems such as ERPs. In this context it is common for ERPs to be supplemented by specialist systems of engagement in support of processes such as order-to-cash and record-to-report. However, initially these implementations tended to be client-specific and one-to-one rather than one-to-many and true BPaaS.

Indeed, BPaaS remains a major trend within finance & accounting. While only start-ups and spin-offs seem likely to use BPaaS to support their full finance & accounting operations in the short-term, suppliers are increasingly spinning off individual towers such as accounts payable in BPaaS form.

However, where BPaaS is arguably coming into its own is in the form of systems of engagement (SoE) to tackle particular pain points and a number of vendors are developing systems of engagement that can be embedded with analytics to provide packaged, typically BPaaS, services. These systems of engagement and BPaaS services are sitting on top of systems of record in the form of, for example, core banking platforms or ERPs to tackle very specific pain points. Examples in the BFSI sector that are becoming increasingly common are BPaaS services around mortgage origination and KYC. Other areas currently being tackled by BPaaS include wealth management and triage around property & casualty underwriting.

In the same way that systems of engagement are currently required in the back-office to support ERPs, systems of engagement are starting to emerge in the front-office to provide a single view of the customer and to recommend “next best actions” both to agents and, increasingly, direct to consumers via digital channels. While automation in BPO in the form of BPaaS and systems of engagement is still largely centered in the back-office and beginning to be implemented in the middle-office, in future this approach and the more advanced forms of automation will be highly important in supporting sales and service in the front-office.

This change in BPaaS strategy from looking to replace core systems to providing systems of engagement surrounding the core systems offers a number of benefits, namely:

- It directly tackles the subject of improving specific KPIs and sub-processes within the organization in a focused and manageable manner, one at a time

- Secondly, it offers the potential, when combined with analytics, to build an element of ongoing process improvement and learning directly into the sub-processes concerned

- Thirdly, it enables a modern digital interface to be implemented on top of the systems of record in support of agents, customers, suppliers, and employees

- Finally, it avoids the need to replace legacy systems on a wholesale basis and can be used to introduce transactional and gainshare pricing rather than licence and FTE-based pricing.

I started with the question “where does automation in BPO go from here?” In summary, BPO automation will only take a significant step forward when RPA becomes AI, and BPaaS emerges from the back-office to support sales and service in the front-office.

These early transformations were also frequently seen by both client and provider as a foundation for utility services, often with the client retaining a stake in the resulting joint venture. So talk of automation and utility services is not new. However, the success rate of these early projects was limited, often being impeded by the challenge, and timescales, of technology implementation and the frequent over-tailoring of initial technology implementations to the client despite the goal of establishing a wider multi-client utility.

So where do we stand with automation today and will it succeed this time around? Let’s focus on the three overriding topics of the moment in the application of automation to BPO: robotic process automation (RPA), analytics, and BPaaS.