The U.K. government has just released its AI Opportunities Action Plan and response, which includes 50 recommendations to boost the use of AI. Watch the video for extended highlights, but here’s a brief summary:

Adopting AI talent

Universities will work with industry to create new AI courses and reskill workers for AI-related jobs, with the high-potential individual visa being expanded to attract top talent from abroad.

Availability of data to train AI models

A National Data Library will be established, making high-potential public data sets available to AI researchers and innovators. All public sector projects will be exposed through APIs to the private sector, if deemed appropriate.

Supporting & scaling AI adoption

A good proportion of AI-related projects fail to scale, so the action plan has identified several recommendations to support organizations in the public sector in identifying and scaling opportunities for AI. This includes:

- Appointing AI sector champions in key industries to work with the government in developing AI adoption plans

- A faster, multi-stage AI procurement process that streamlines the funding of pilots and projects, with stricter controls on larger investments

- The creation of a U.K. Sovereign AI body to partner with private sector companies investing in AI ventures and adopting an AI scan, pilot and scale approach to develop AI applications. Undoubtedly there is an opportunity to use technologies such as task and process mining to highlight areas for improvement that can benefit from AI. For an example, see my blog on process mining within the NHS: Harnessing Process Mining Data to Transform the NHS.

Investments in AI infrastructure

Recommendations include expanding the AI Research Resource (AIRR) to at least 20 times its capacity by 2030. At this expanded scale, the AIRR could run more AI research and mission-oriented clusters, each led by a program director, for the likes of model training. The plan also recommends establishing AI growth zones, with streamlined planning permission approvals to build the data centers needed to support these investments.

Initiatives already in play include:

- The creation of an AI growth zone in Oxfordshire

- Nscale planning to build its first U.K. data center in Essex as part of a £1.2bn investment to scale up to 50MW in AI and high performance computing capacity

- Vantage building a large campus in Bridgend, Wales, offering up to 150MW in AI compute capacity over the next 10 to 15 years

- In mid-2024, Blackstone announcing its plan to create ten data centers in Northumberland as part of a £10bn investment

- Kyndryl announcing its plan to create up to 1k AI-related jobs in Liverpool over the next three years

- In December 2024, the government greenlighting a previously blocked 140MW Corscale data center in Buckinghamshire

- The government designating data centers as critical infrastructure that will benefit from relaxed planning regulations.

As soon as the individual strategies for the AI Opportunities Action Plan’s recommendations emerge, I'll be providing more detailed analysis.


In the U.K., the efficiency of the NHS, and particularly the reduction of waiting lists, continues to be a hot political topic. While promises of cash injections are met with skepticism, a recent event in London showcased how data-driven insights could help improve the NHS’ operational efficiency without the need for major financial outlays. As part of its 22-city tour, process mining vendor Celonis presented its vision for transformative applications within the NHS.

How Celonis can deliver NHS efficiencies

The Celonis process mining platform ingests process data from systems of record, for example CRM systems, and supports organizations in visualizing and analyzing process flows to identify opportunities for process efficiency gains.
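To make the idea of analyzing process flows concrete, here is a minimal sketch of one basic process mining step: counting which activities directly follow one another within each case of an event log. It does not represent how the Celonis platform works internally; the `EVENT_LOG` rows, the activity names, and the `directly_follows` function are all hypothetical and chosen purely for illustration.

```python
from collections import Counter, defaultdict

# Hypothetical event log rows: (case_id, activity, ISO 8601 timestamp).
# All values are invented for illustration only.
EVENT_LOG = [
    ("A1", "Referral received", "2024-01-02T09:00"),
    ("A1", "Appointment booked", "2024-01-03T10:00"),
    ("A1", "Reminder sent", "2024-01-09T18:00"),
    ("A1", "Patient attended", "2024-01-10T09:30"),
    ("A2", "Referral received", "2024-01-02T11:00"),
    ("A2", "Appointment booked", "2024-01-04T14:00"),
    ("A2", "Reminder sent", "2024-01-11T18:00"),
    ("A2", "Appointment canceled", "2024-01-11T20:00"),
]


def directly_follows(event_log):
    """Count how often one activity is directly followed by another within a case."""
    traces = defaultdict(list)
    for case_id, activity, timestamp in event_log:
        traces[case_id].append((timestamp, activity))

    counts = Counter()
    for events in traces.values():
        events.sort()  # same-format ISO 8601 strings sort chronologically
        activities = [activity for _, activity in events]
        for source, target in zip(activities, activities[1:]):
            counts[(source, target)] += 1
    return counts


if __name__ == "__main__":
    for (source, target), n in sorted(directly_follows(EVENT_LOG).items()):
        print(f"{source} -> {target}: {n}")
```

Counts like these are the raw material for a process map: frequent paths show the standard flow, while rarer paths (such as a reminder followed by a cancellation) point to where to investigate.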

A standout example at the event was the use of Celonis at University Hospitals Coventry and Warwickshire (UHCW). Collaborating with IBM, UHCW implemented Celonis to address inefficiencies and reduce waiting lists. While the hospital knew the issues it had, Dr Jacob Koris, one of the NHS’ representatives at the event and a fellow of the NHS’ Get It Right First Time initiatives, stated that the insights from Celonis helped uncover the data to guide process transformation programs. Here are some of the insights that were detected and what the trust has done to address specific issues:

Initial challenges

Challenges included:

- 6.3% of patients did not attend pre-booked treatments

- 21% canceled treatments less than five days before the appointment

- 7% of appointments were canceled by the hospital itself

Data-driven solution

Celonis was deployed, integrating patient booking data to help UHCW understand the underlying reasons why treatment appointments were missed or canceled, as well as suggesting and monitoring process improvement plans.

The Celonis platform mapped out the process, which included patients initially receiving text message reminders the day or even evening before treatment. These late reminders were identified by the Celonis platform as a possible root cause for late cancellations from patients. UHCW conducted A/B testing, comparing reminders sent four days before scheduled treatments with the initial one-day reminder, with Celonis tracking the testing.

This change reduced missed appointments from 10% to 4% and improved proactive communication from patients.
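As a rough illustration of how the result of such an A/B test might be checked, the sketch below applies a standard two-proportion z-test to the missed-appointment rates. Only the 10% and 4% rates come from the figures above; the group sizes of 1,000 patients each are an assumption made for the example, and the function name is hypothetical. It is not a description of the analysis UHCW or Celonis actually performed.

```python
import math


def two_proportion_z_test(missed_a, total_a, missed_b, total_b):
    """Compare two missed-appointment rates with a pooled two-proportion z-test."""
    rate_a = missed_a / total_a
    rate_b = missed_b / total_b
    pooled = (missed_a + missed_b) / (total_a + total_b)
    std_error = math.sqrt(pooled * (1 - pooled) * (1 / total_a + 1 / total_b))
    z = (rate_a - rate_b) / std_error
    # Two-sided p-value under the standard normal distribution
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return rate_a, rate_b, z, p_value


if __name__ == "__main__":
    # ASSUMPTION: 1,000 patients per group; the real group sizes are not reported.
    rate_a, rate_b, z, p_value = two_proportion_z_test(
        missed_a=100, total_a=1000,  # one-day reminder, 10% missed
        missed_b=40, total_b=1000,   # four-day reminder, 4% missed
    )
    print(f"one-day: {rate_a:.1%}, four-day: {rate_b:.1%}, z={z:.2f}, p={p_value:.2g}")
```

With groups of this assumed size, a drop from 10% to 4% would be very unlikely to be down to chance; with much smaller groups, the same percentages would be less conclusive.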

Impact

The improved appointment management allowed the hospital to reallocate previously vacant slots to other patients.

UHCW reduced its waiting list from 72,000 to 67,000, one of the few trusts to achieve a waiting list reduction in the past year.

UHCW’s success extends beyond reducing missed appointments. For example:

- Cost savings and efficiency:

  - £1.4 million in annual benefits were realized from short-term interventions

  - 17,000 appointments were released by reducing wastage

  - 700 more patients per week were accommodated without increasing staff numbers

- Operational improvements:

  - Addressed low-value clinical time use caused by inappropriate referrals

  - Enhanced hospital productivity by reducing high patient call volumes

  - Improved patient experience by decreasing waiting times for treatments.

The broader picture

The success at UHCW has led to the adoption of Celonis by other NHS hospitals, including University Hospital Dorset and Dorset County Hospital. These hospitals are also seeing significant benefits. University Hospital Dorset achieved an annualized benefit of £1.8m, while Dorset County Hospital reported an annualized benefit of £1.1m.

IBM is further supporting these hospitals by deploying Watsonx GenAI in PoCs to enhance patient issue coding and automate the validation of clinical letters, reducing the need for human intervention at the hospitals.

Despite these successes, some NHS trusts and hospitals remain cautious due to past experiences with IT projects that promised but failed to deliver significant improvements. However, the tangible benefits realized by UHCW and other hospitals demonstrate the potential of tools like Celonis to not only replicate existing wins but also uncover new efficiencies.

For example, in conversation at the event, IBM indicated that gainshare or other rewards-based contracting could be offered as an incentive for other hospitals. Also, at IBM’s recent partner event focused on GenAI, the company demonstrated a number of outcome-based contracts with other clients focused on process transformation using GenAI technologies.

Future use cases discussed by Dr Jacob Koris at the Celonis event include:

- Reducing the need for multiple hospital visits by scheduling all necessary tests on the same day

- Coordinating appointments for patients and their dependents to occur within the same appointment window.

Conclusion

The Celonis event in London highlighted how data-driven process improvements can help the NHS tackle long-standing issues such as waiting lists without the need for significant additional funding. And while the savings made do not go very far in terms of reducing the overall funding gap, the potential impact for more hospitals and more use cases leveraging technologies such as Celonis to help address inefficiencies is clear.


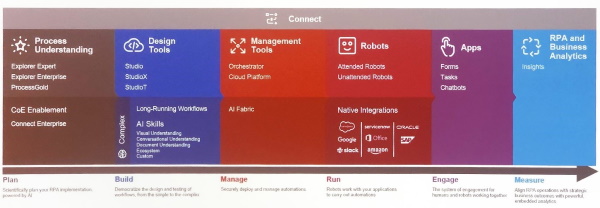

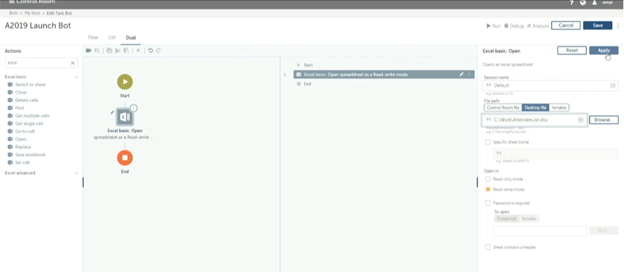

NelsonHall recently attended UiPath’s Forward VI event in Las Vegas, at which the company launched its Autopilot capabilities. While the company launched Autopilot across all its platform components, including Studio, Assistant, Apps, mining, and Test Manager, this blog focuses on its use within Studio and Assistant.

Autopilot for Studio

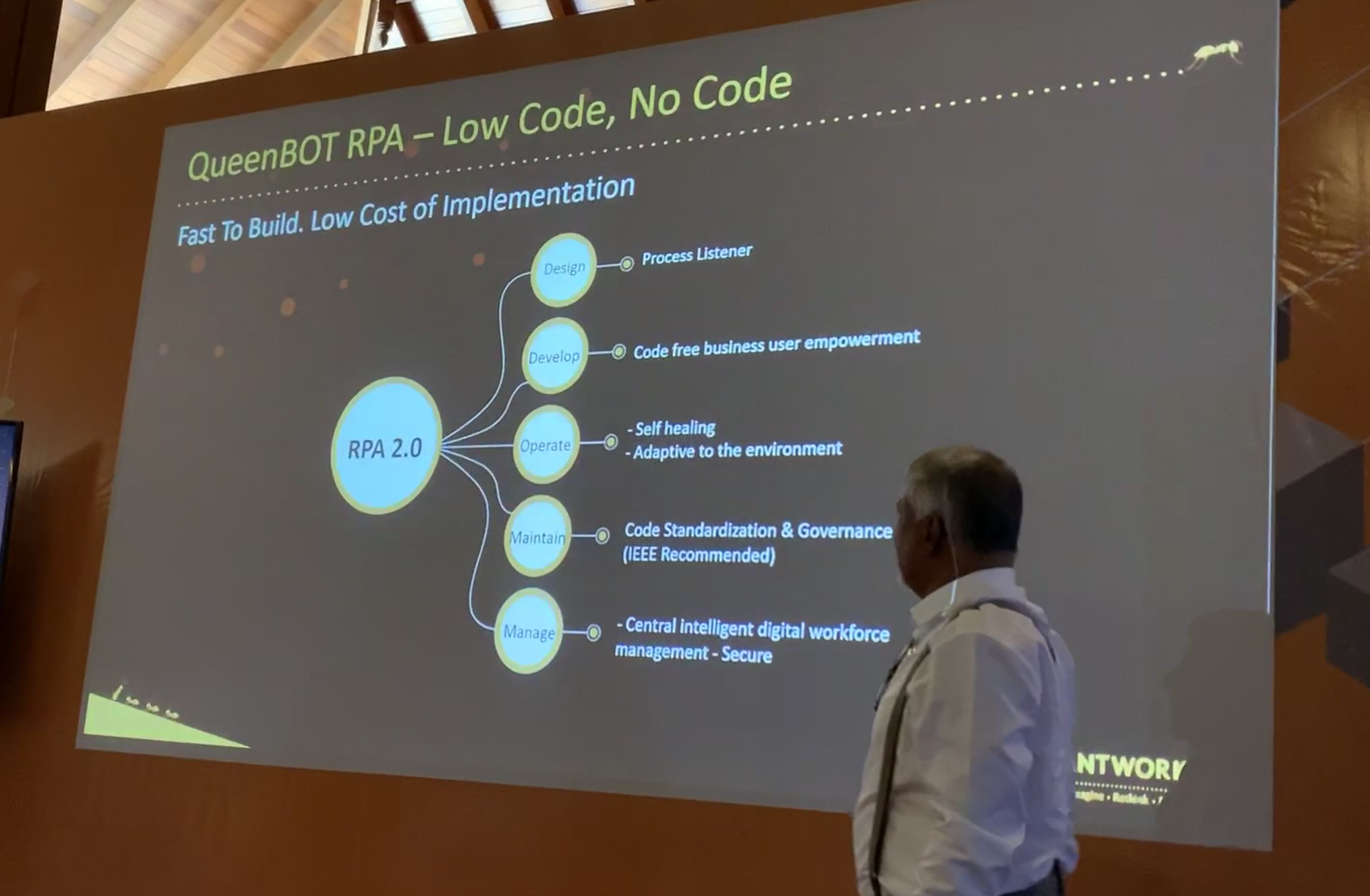

A constant inhibitor to automation has been the capacity of organizations to build the automations. In the early days, automation relied on skilled developers writing code to build the automation. Platform vendors such as UiPath sought to tackle this inhibitor with the launch of low- and no-code IDEs such as UiPath Studio. However, while that development launched this current wave of automation, companies were still hamstrung by the number of employees trained on these platforms who were able to deliver these results.

Seeking to increase the number of employees that could develop automations, each of the platform vendors focused on increasing support for citizen developers, with UiPath launching StudioX as a simplified version of Studio; indeed, at Forward VI, Deloitte announced that it is committing to having 10% of its 415k employees trained on UiPath as part of a citizen developer drive.

Still, these efforts have not been enough to overcome the capacity issues within clients. To address these issues, UiPath has launched Autopilot for Studio, embedding the capability for users to write requirements for bots in natural language text, from which Autopilot then generates an automation in Studio.

This will support not only citizen developers but also experienced developers, who are often handed bots built by citizen developers to make them enterprise-grade. With Autopilot, we can expect the quality of citizen-developed bots to increase. The demonstrations of Autopilot at the event showed bots being developed faster than a typical automation developer could manage.

Autopilot for Assistant

On the second day of Forward VI, we also saw a demo of Autopilot for Assistant. Assistant is the interface that lets desktop users see and run all the automations. For the most part, using this interface has meant selecting automations to run from a list of bots, or leveraging the beta Clipboard AI functionality to quickly and automatically copy information from one interface or image into fields within applications.

With the launch of Autopilot for Assistant, users can now interact through natural language with the interface. This chat interface allows Autopilot to find bots that can answer users’ requests and run desktop automations live on the machine.

Importantly, this is not where the magic ends: if a user asks for something that cannot be completed using existing bots, Autopilot can use the same intelligence used in Autopilot for Studio to create bots that can leverage connectors within Autopilot for Studio to accomplish the task, prompting the human user for confirmation along the way. If the process is successful and the user believes that this automation would be useful, they may add this to the automation hub along with an automatically generated description of the process and the steps performed so that developers can perform any ‘last mile’ work to build the bot, which can be referenced in the future.

In the demo, we saw Assistant being asked to connect with a specific person on LinkedIn. Autopilot interpreted the request, launched a browser, searched for the person, and before requesting the connection, asked the user to confirm the action. In this manner, desktop automation through UiPath Assistant is less constrained by the availability of developers or even citizen developers.

How these developments compare to the competition

UiPath isn’t the first automation platform provider to launch a copilot for its IDEs: in May this year we saw Automation Anywhere launch its copilot capabilities, and last month, Microsoft showed its copilot for Power Automate; both strive to offer bot generation through natural language.

Where UiPath is ahead of the competition is in its secondary use case above, building bots on the fly to support digital assistants. This capability could not only boost the capability and quality of citizen developers, but also reduce the need for them entirely.

Another difference we see with these offerings is their pervasiveness, with UiPath launching variants of its copilot across each platform component, including document understanding, test automation, apps, and mining.

UiPath also shared a vision for the future that we did not hear from the competition: using these generative AI capabilities to build auto-healing robots; i.e. automatically fixing aspects that have broken using bot descriptions and the AI behind Autopilot, so reducing the ongoing management cost of bots.


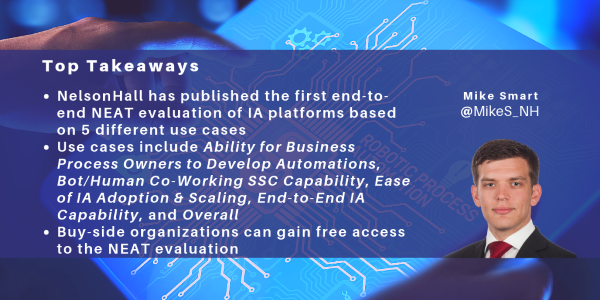

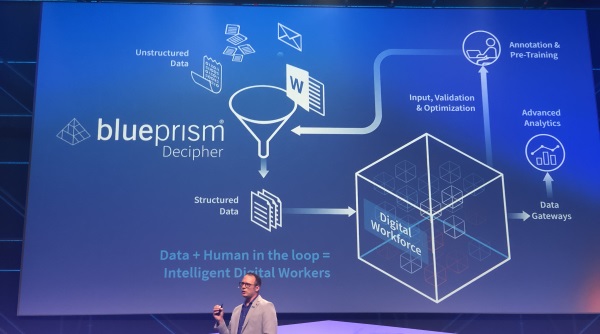

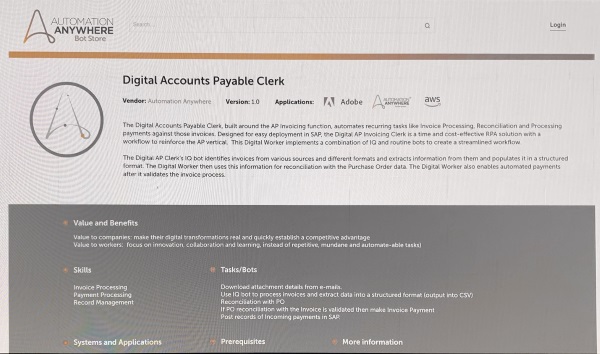

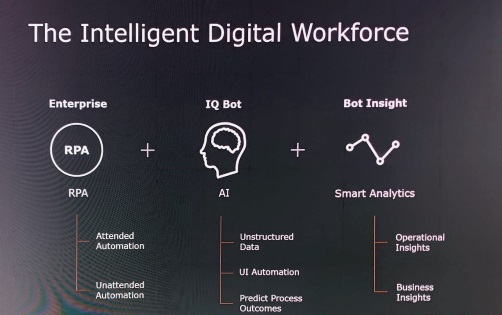

Automation Anywhere recently announced new capabilities for its platform powered by generative AI: Automation Co-Pilot for Business Users and for Automators, plus Document Automation. Here I take a look at these new capabilities and how they compare with those of other providers, and consider the wider implications of generative AI solutions for automation developers.

Automation Co-Pilot for Business Users

This is an update to Automation Co-Pilot AARI launched in October 2022, leveraging generative AI capabilities in automation assistants that can be embedded into business applications.

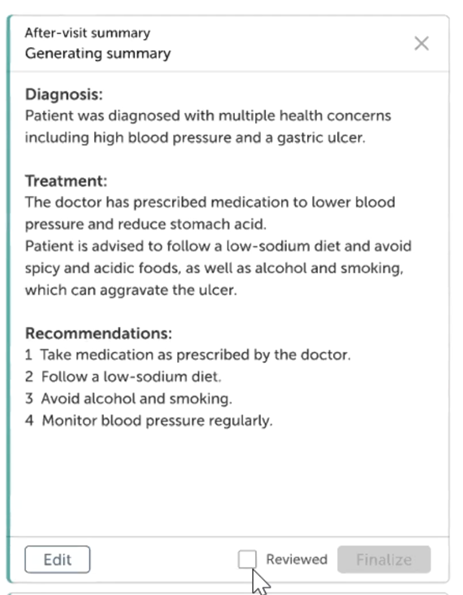

In the use case shown by Automation Anywhere, a doctor uses Co-Pilot to retrieve patient records and lab results, extracting data from PDF lab results which the doctor can validate, and then uses GPT to generate a summary of the information and editable next-action recommendations:

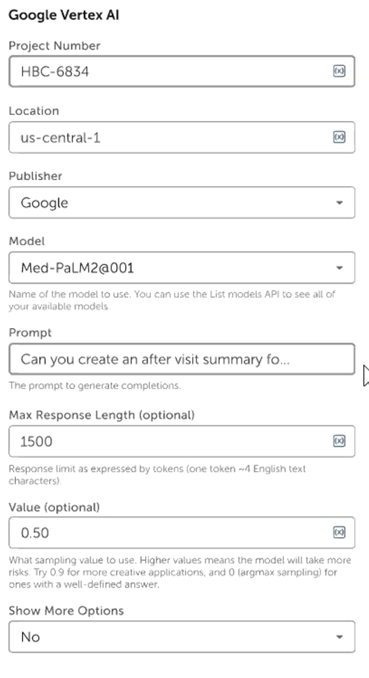

For this solution, Co-Pilot was configured with Process Composer to add the generative AI solution, select the AI model and enter the prompt for the AI. These solutions can leverage generative AI from Microsoft Azure OpenAI, Google Vertex AI (below), Amazon Bedrock, and Nvidia NeMo.

Automation Co-Pilot for Business Users is now available under a ‘bring your own license’ (BYOL) model, with Amazon Bedrock support added in Q2.

Automation Co-Pilot for Automators

Automation Co-Pilot for Automators aims to support automation designers in building bots by embedding Co-Pilot into the IDE. Using natural language text, users can enter bot requirements, which Co-Pilot then uses to generate the skeleton of an automation flow in the designer. Users can then continue the chat with Co-Pilot to update this flow.

Following the automation skeleton's generation, Co-Pilot prompts the user to confirm whether the generated automation matches the requirements, as part of reinforcement learning.

Automation Co-Pilot for Automators will be available for preview in July 2023, with pricing and packaging to be announced.

Document Automation

The third new capability announced is generative AI large language models to analyze, extract, and summarize information as part of Automation Anywhere’s document automation capabilities.

The company states that large language models with minimal customization can read through large documents to find specific clauses or to gain a general understanding of unstructured documents.

Generative AI for Document Automation is expected to launch in Q3 2023. The company is evaluating different generative AI solutions and models, as these will be offered as an embedded solution rather than BYOL.

How does this compare with other providers?

Generative AI is undoubtedly a hot topic inside and outside the automation space, and the two big themes have been connecting to these large language models within automations and using these models for ‘automating automation’.

In the last month, we’ve seen UiPath going down similar paths with the public preview of its connectors to OpenAI and Azure OpenAI and its Clipboard AI offering which uses generative AI solutions to quickly copy information between applications.

There has also been a lot of news related to code generation, including automation code, using these large language models. These have tended to be focused on generating Python code which can then be imported into automation IDEs rather than embedded into low-code designers, as shown above with Automation Co-Pilot for Automators.

Automation Anywhere has built data protection, security, and compliance requirements into its generative AI offerings. Much has been said about security concerns with developers using generative AI models, with some organizations such as Apple banning employees from using ChatGPT over fears that IP in these conversations is being used to train the large language models.

With these integrations to third parties, Automation Anywhere has stated that client data is secure and will not be used to train any shared models.

What will generative AI solutions mean for automation developers?

The generative AI solutions that the Automation Anywhere platform is leveraging can be very impressive and the natural question following the Automation Co-Pilot for Automators example is whether the automation space still requires developers.

To that question, NelsonHall answers yes.

Generative AI, similar to low/no code IDEs, is opening up the ability to develop automations for more business users, requiring much less intensive coding or development experience than the old IDEs from several years ago. These advances have mainly been feathers in automation developers’ caps as well as allowing citizen developers to build an automation skeleton quickly. While expanding developer resources to encompass business users who know the process can certainly help in the development of automations, early attempts at this have run into pitfalls due to poor processes being automated inefficiently, and with little investment to ensure that these automations are shared across the organization relative to a centralized automation CoE.

For larger, more complex automations that offer enterprise-wide impact, skilled automation developers are still required to translate the business requirements into automations to leverage the best-in-class methodologies, AI models, and supporting platforms that can be updated to match changing business requirements. In these situations, process understanding (task and process mining) platforms can be used to more fully understand the process and automatically create automation skeletons requiring last-mile work similar to that when using generative AI.


NelsonHall recently attended an IBM Security analyst day in London. This covered recent developments such as IBM’s acquisition of Polar Security on May 16th to support the monitoring of data across hybrid cloud estates, and watsonx developments to support the move away from rule-based security. However, a big focus of the event was the subject of risk.

For the last few years, the conversation around cybersecurity has shifted to risk, highlighting the potential holes within a resiliency posture, for example, and asking questions such as ‘if ransomware were to shut down operations for six hours, what would the implications for the business be?’

IBM and a number of other providers, therefore, have been offering ‘risk scores’ related to aspects of an organization’s IT estate. These include risk scores from IBM’s Risk Quantification Service; from IBM Guardium, for risk related to the organization’s data, including its relationship with data security regulations; from IBM Verify, for risk related to particular users; and from the recently acquired Randori, the company’s attack surface management solution.

Randori, acquired in June 2022, is a prime example of IBM’s strengths in understanding and reducing risks. Its two offerings, Randori Recon and Randori Attack, aim to discover how organizations are exposed to attackers and provide continuous automated red teaming of the organization’s assets.

After running discovered assets, shadow IT, and misconfigurations through Randori Attack’s red team playbooks, clients are presented with the risks through a patented ‘Target Temptation’ model. In this way, organizations can prioritize the targets that are the most susceptible to attack and monitor the change in the level of risk on an ongoing basis.

IBM’s Risk Quantification Service uses the NIST-certified FAIR model, which decomposes risk into quantifiable components: the frequency at which an event is expected and the magnitude of the loss that is expected per event. In this manner, the service performs a top-level assessment of the client’s controls and vulnerabilities, makes assumptions such as the amount of sensitive information stolen during a breach based on prior examples, and produces a probability of loss and the costs related to that loss, including fines and judgments from regulatory bodies.
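The frequency-times-magnitude decomposition can be sketched in a few lines. This is a simplified illustration under stated assumptions, not IBM’s service or the full FAIR methodology: the `LossScenario` class, the scenario names, and every number are hypothetical, and the loss magnitude is summarized with a PERT-style weighted mean of minimum, most likely, and maximum estimates.

```python
from dataclasses import dataclass


@dataclass
class LossScenario:
    """A hypothetical loss scenario; all figures are illustrative only."""
    name: str
    events_per_year: float   # expected loss event frequency
    loss_min: float          # lowest plausible loss per event
    loss_most_likely: float  # most likely loss per event
    loss_max: float          # highest plausible loss per event

    def expected_magnitude(self) -> float:
        # PERT-style mean: weights the most likely estimate four times
        return (self.loss_min + 4 * self.loss_most_likely + self.loss_max) / 6

    def annualized_loss(self) -> float:
        # Risk expressed as frequency multiplied by magnitude
        return self.events_per_year * self.expected_magnitude()


if __name__ == "__main__":
    scenarios = [
        LossScenario("Ransomware outage", 0.2, 100_000, 500_000, 3_000_000),
        LossScenario("Data breach with fines", 0.05, 200_000, 1_000_000, 7_800_000),
    ]
    for s in sorted(scenarios, key=lambda s: s.annualized_loss(), reverse=True):
        print(f"{s.name}: expected annual loss of about {s.annualized_loss():,.0f}")
```

Ranking scenarios by annualized loss is one simple way to compare where remediation spending might be justified; fuller FAIR analyses typically work with distributions rather than single expected values.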

This is not the first time we have seen this model, or a similar approach, being taken by vendors offering cyber resiliency services. One such vendor is Unisys, which in 2018 offered its TrustCheck assessment. This used security data and X-Analytics' software to analyze the client's cyber risk posture and its associated financial impacts, which were plotted against the likelihood of the threat event.

TrustCheck was used as a driver for the Unisys cybersecurity business; it set the expected loss against the cost of remediation, giving guidance on whether the value of securing the client's environment was greater than the cost of remediating a gap, and it conveyed this information to the C-level.

So what is the difference between IBM’s approach to risk and Unisys’ TrustCheck service?

IBM has been approaching its risk quantification from both ends: a bottom-up measurement of users, data, compliance, and the IT estate using platforms such as Guardium, Verify, and now Randori, and a top-down view within its Risk Quantification Service. At the analyst event in London, there was a clear indication that IBM is looking to converge these risk scores over time to provide a more accurate and consistent view of an organization’s risk: for example, using the outputs from Randori Recon to understand the client’s exposure; Guardium and Polar Security to understand what data is being held and where it could travel; and Verify to understand what user access exists. A consistent, accurate view of the client’s resiliency would then be used to drive decision-making.

This convergence of risk scores will not be an immediate development. Randori has just undergone a year of development to integrate its UX into QRadar for a unified experience, and its upcoming development will include being brought into the IBM Security QRadar suite as part of an Attack Surface Management (ASM) service before a consistent risk score service is complete. Likewise, the acquisition of Polar Security needs time to bed into the data security estate.

NelsonHall does, however, welcome any moves that result in more organizations knowing more about the risks to their business, and the financial risks associated, which has traditionally been a major stumbling block for organizations in understanding what remediation should be taken to increase security postures beyond the baseline of compliance requirements.


Process discovery and mining platforms, which examine organizations’ process data as part of transformation initiatives, have become an increasingly critical part of process automation and reengineering journeys.

Here I look at what to expect in the process discovery and mining space in 2023 and beyond.

Continuous Monitoring

Traditionally we have seen COEs use process discovery and mining to focus solely on single point-in-time process improvements as part of the transformation journey. Because of this, when an improved process is put into place the focus (and licenses) for the process discovery and mining suites are moved onto the next project.

In 2023, we predict that these solutions will be used to support more process analysis on an ongoing basis, with licenses being used on already reengineered processes to support KPI monitoring and ongoing process improvement. There are certainly features being built into the platforms and pricing models that are reinforcing this move, such as being able to use process discovery platforms to train users on parts of processes that cannot be automated, and unlimited usage licenses that aren’t tied to the number of users or the amount of process data ingested.

In this way, process discovery and mining solutions can provide a real-time view of actual process performance, augmenting business process management (BPM) platforms.

Automation and Low Code Application Development Links

Process discovery and mining have always been great lead-ins for automation, revealing what processes are in place before automating them; however, that connection has mainly been one way, i.e. process discovery and mining platforms sending over the skeletons of a process to automation platforms to build an automation.

In 2023, we see process discovery platforms implementing more functionality in the reverse direction to take automation logs from the automation tools back into the discovered process to track the overall performance of the process on an ongoing basis, whether the steps of the process are automated or performed by a human.

Likewise, when a process cannot be fully automated and requires human effort, automation platforms are implementing low code applications to collect the necessary information. We envisage the process discovery and mining platforms not only building skeletons of the processes for automation, but also building suggestions from low code applications.

Digital Process Twins

Usually when we refer to digital twins we are talking about a digital representation of a manual process enabled by IoT as part of Industry 4.0. However, at the end of last year, we saw one or two vendors moving towards the creation of digital process twins for business operations.

The digital process twin is the culmination of continuous monitoring of both a process and its automation. Using these features, process understanding solutions can be the future of BPM, providing real-time tracking of the performance of a process, and they can enable opportunities like preventative maintenance, leveraging root cause analysis to find when a process is showing signs that it is straying from the target model.

Object-Centric Process Mining

Traditional process mining ascribes a single case notion to every step of the process, but this isn’t the best fit for every process.

For example, in car manufacturing, the production of a car could be held up by the materials for the glass in the windows, a dependency that is hard to see if a single case notion is used. A car manufacturer will not be ascribing the same case identifier for silicon arriving at the factory to the car that will eventually be fitted with windows made from that silicon consignment.

In object-centric process mining (OCPM) you would not use a single case notion as the only linking piece in a process. Instead, the case notion ceases to be the be-all-and-end-all of the process and each object, each aspect of the process, is tracked individually, with its own attributes, as part of a whole process.

The object-centric process could then, in the car example, relate case numbers from user issues to the vehicle, to the order they placed, to the windscreen, and to the delivery of silicon.

Such OCPM will expand the usefulness of process discovery and mining from processes that are fairly simple and related to a single case, such as a ticket number on an email, to a more complete view of the process.
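The car example can be sketched as a small data structure in which each event references several objects rather than one case identifier. This is a minimal illustration only: the event records, object identifiers, and the `related_objects` helper are hypothetical and do not reflect any particular vendor’s data model.

```python
from collections import defaultdict, deque

# Hypothetical object-centric event log: every event may reference several
# objects, each given as object type -> object identifier.
EVENTS = [
    {"activity": "Raw material delivered", "objects": {"delivery": "D-7"}},
    {"activity": "Windscreen produced", "objects": {"delivery": "D-7", "windscreen": "W-31"}},
    {"activity": "Order placed", "objects": {"order": "O-9"}},
    {"activity": "Vehicle assembled",
     "objects": {"windscreen": "W-31", "vehicle": "V-2", "order": "O-9"}},
    {"activity": "Issue reported", "objects": {"issue": "I-4", "vehicle": "V-2"}},
]


def related_objects(events, start):
    """Return every (type, id) object reachable from `start` through shared events."""
    events_by_object = defaultdict(list)
    for event in events:
        for ref in event["objects"].items():
            events_by_object[ref].append(event)

    seen = {start}
    queue = deque([start])
    while queue:
        current = queue.popleft()
        for event in events_by_object[current]:
            for ref in event["objects"].items():
                if ref not in seen:
                    seen.add(ref)
                    queue.append(ref)
    return seen


if __name__ == "__main__":
    # Trace a user issue back to the vehicle, order, windscreen, and delivery
    for obj_type, obj_id in sorted(related_objects(EVENTS, ("issue", "I-4"))):
        print(obj_type, obj_id)
```

Because objects are linked through the events they share, the issue can be traced to the original delivery without forcing everything under one case identifier. On real logs, following every link transitively can pull in far more objects than intended, so a practical implementation would likely limit which object types or how many hops are followed.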

***

In this quick look at the future of process discovery and mining, we acknowledge that the features described here may not be the core application of these platforms before the end of 2023, as organizations will continue to use existing functionality to target the bulk of legacy processes requiring quick fixes to reduce costs and perform one-off improvements to processes.


NelsonHall recently attended UiPath’s Forward V event in Las Vegas. The company has recently passed $1bn in Annual Recurring Revenue (ARR), up 44% y/y, enabled through its base of 10.5k clients. This includes some very large clients such as Generali, and, similar to the other tier 1 automation platform providers, an exceptionally long tail of clients with <10 bots. UiPath’s messaging focused on how enhancements to its platform will enable clients to ‘Go Big’ on automation and reach the scale of Generali. This will be critical to UiPath’s long-term growth plans.

Continuous discovery with task & process mining

As such, some of the announcements during the event focused on what UiPath calls ‘continuous discovery’. While the company gained task and process mining capabilities through the acquisitions of ProcessGold and StepShot in 2019 (see the Forward III event blog), Chief Marketing Officer Bobby Patrick stated that back then the end-to-end nature of the platform was mostly ‘on paper’ and not in production, as these components had not been fully integrated. The company now states that the platform is fully integrated, allowing users to help clients discover the as-is state of processes, take actions to optimize and automate them, and then continuously monitor them for ongoing improvement.

What, to some degree, still remains on paper is being able to harness the joint capabilities of task mining and process mining to offer a fuller definition of long complicated processes; currently, UiPath has just a few dozen clients using both components. The general feedback from clients at the event was that these components are not being used in a ‘continuous discovery’ manner (i.e. continuously assessing a process over time for further opportunities for automation and efficiency gains). Instead, licenses are being cycled round the client organization with different departments receiving a point-in-time assessment of the process. We expect this to remain the case going forward in all but very rare scenarios, until task mining licenses decrease in cost.

Another recent acquisition is Re:infer, which brings communications mining functionality. Unlike task or process mining, Re:infer’s focus is solely on electronic communications within client organizations. The platform can analyze emails, chat logs, social messages, and more, to create actionable business data and new opportunities for automation using its NLP engine. Then, when building and running automations, this NLP engine can be used on inbound electronic communications to trigger automations within UiPath.

The use of Re:infer is in its early days within UiPath, being only in private preview, and (somewhat like ProcessGold and StepShot in 2019) it has not been fully integrated into the platform. NelsonHall envisions that as Re:infer becomes more integrated into the general UiPath platform suite, it will have the opportunity to become the main NLP engine; for example, being integrated into task mining to better understand emails and documents that employees are working on.

Go Big: theme of UiPath’s Forward V event

Enhancing automation development environments

The UiPath platform offers three development environments: Studio is an advanced product for experienced developers, while StudioX and Studio Web are for less experienced developers.

The most notable recent release is Studio Web, a browser-based automation development environment. The concept is not especially new, mirroring Automation Anywhere’s Automation 360 web IDE, but is a welcome one, especially as the company is continuously improving its offering to citizen developers.

Projects developed in Studio Web can be edited by users of Studio and Studio X. A target use case is a citizen developer logging into Studio Web and creating an automation that, when it is ready to be rolled out more widely, is sent to Studio for the client’s automation CoE to ensure the robustness of the bot.

Incorporated into Studio Web along with Studio and Studio X are ‘document understanding’ advancements. Along with overall enhancements to the extraction engine to support the likes of signature, barcode, QR code, and improved table extraction, UiPath has added native support for verticalized pre-built models for processing tax, insurance, and transportation documents.

Separate to its automation Studios, UiPath has expanded the capabilities of its Apps component to simplify the creation of lightweight applications, and for the first time, allow for the creation of public-facing apps.

UiPath also announced a partnership with OutSystems, another low-code application provider. While UiPath believes its low-code application builder is suitable for a wide variety of applications, it has no plans to support high-complexity systems such as CRM applications. In these use cases, the company believes OutSystems can fill the gap.

One app created using UiPath Apps and customizable by clients is Automation Launchpad, a springboard to guide client citizen developers through their company’s automation program; for example, providing information on how they may submit an idea.

Lastly, UiPath has added a connection builder to its Integration Service, its enabler of API automation. Similar to the process and task mining capabilities, Integration Service is now supported across all UiPath components, allowing users to use APIs in addition to the suites’ traditional UX automation capabilities. The connection builder allows clients to build connections to in-house and specialized industry solutions.

Conclusion

These advancements will be critical in enabling more use cases for the platform or allowing for easier identification of automation opportunities and more processes that can be automated. Specifically:

- The process understanding module’s integration will support more organizations opting to discover and understand the processes that are ripe for automation

- Re:infer will, as it integrates into the platform, allow for the detection of electronic conversations that have lots of inefficient back and forth

- Public-facing apps open up the possibility for organizations to develop lightweight citizen-led ways to interact with customers

- And connection builder allows for more API automation.

These features, along with a change in GTM within sales to support clients who represent the best opportunities to become power users, will be the key to supporting the array of clients that currently don’t take advantage of the wide capabilities of the platform. In speaking with clients at Forward V, each and every one had bandwidth capacity issues with, in most cases, hundreds of opportunities for automation to be identified, but a lack of capacity to automate them all. Therefore, NelsonHall believes the immediate growth drivers will be the features that reduce time to make automations; for example, encouraging more citizen development with Studio Web or within testing automation, and migrating test signatures from QA testing platforms such as HP ALM.

]]>

Capgemini has launched a new digital transformation service, One Operations, with the specific goal of driving client revenue growth.

One Operations: Key Principles

Some of One Operations’ principles, such as introducing benchmark-driven best practice operations models, taking an end-to-end approach to operations across silos, and using co-invested innovation funds, are relatively well established in the industry. However, what is new is building on these principles to incorporate an overriding focus on delivering revenue growth. The business case for a One Operations assignment focuses on facilitating the client’s revenue growth and taking a B2B2C approach focused on the end customer, emphasizing the delivery of insights that enable client personnel to make earlier decisions focused on the enterprise’s customers.

Capgemini’s One Operations account teams involve consulting and operations working together, with Capgemini Invent contributing design and consulting and the operational RUN organization provided by Capgemini’s Business Services global business line.

Implementing a One Operations philosophy across the client organization and Capgemini is achieved through shared targets to reduce vendor/client friction and co-invested innovation funds. One Operations assignments involve setting joint targets with a continuously replenished co-invested innovation fund of ~10–15% of Capgemini revenues used to fund digital transformation.

One Operations is very industry-focused, and Capgemini is initially targeting selected clients within the CPG sector, looking to assist them in growing within an individual country or small group of countries by localizing within their global initiatives. The key to this approach is demonstrating to clients that it understands and can support both the ‘grow’ and ‘run’ elements of their businesses and having an outcome-based conversation. Capgemini is typically looking to enable enterprises to achieve 4X growth by connecting the sales organization to the supply chain.

Assignments commence with working sessions brainstorming the possibilities with key decision-makers. The One Operations client team is jointly led by a full-time executive from Capgemini Invent and an executive from Capgemini’s Business Services. The Capgemini Invent executive remains part of the One Operations client team until go-live. The appropriate business sector expertise is drawn more widely from across the Capgemini group.

One Operations assignments typically have three phases:

- Deployment planning (3–6 months) to understand the processes and associated costs and create the business case

- Deployment (6–15 months) to create the ‘day one’ operating model

- Sustain, involving go-live and continuous improvement.

At this stage, Capgemini has two live One Operations assignments with further discussions taking place with clients.

Using End-to-End Process Integration to Speed Up Growth-Oriented Insights

Capgemini’s One Operations has three key design principles:

- Re-inventing the organization and embedding a growth mindset by reducing business operations complexity and enabling an AI-augmented workforce to focus on their customers and higher-value services

- Increasing the level of end-to-end integration by improving data accuracy and incorporating AI to achieve ‘touchless forecasting & planning’ and enable better decisions and speed of innovation. ‘Frictionless’ end-to-end integration is used to support more connected decisions and planning across the value chain

- Transforming at speed and scale.

These transformations involve:

- Shaping the strategic transformation agenda through defining the target operating model based on peer benchmarks and using standardized operating model design, assets, and accelerators

- Using a digital-first framework incorporating One Operations pre-configured digital process evaluation and digital twins

- Deploying D-GEM technology accelerators, ranging from platforms to microtools and including AI-augmented workforce solutions and Capgemini IP such as Tran$4orm

- Augmented operations using Capgemini Business Services.

Changing the mindset within the enterprise involves freeing personnel from tactical transactional activities and providing relevant information supporting their new goals.

Capgemini aims to achieve the growth mindset in client enterprises by enabling an integrated end-to-end view from sales to delivery, facilitating teams with digital tools for process execution and growth-oriented data insights. Within this growth focus, Capgemini offers an omnichannel model to drive sales, augmented teams to enable better customer interactions, predictive technology to identify the next best customer actions, and data orchestration to reduce customer friction.

One Operations also enables touchless planning to improve forecast accuracy, increase the order fill rate, reduce time spent planning promotions, and accelerate cash collections to reduce DSO. Improving promotions accuracy and product availability is also key to revenue growth within CPG and retail environments.

Shortening Forecasting Process & Enhancing Quality of Promotional Decisions: Keys to Growth in CPG

The overriding aim within One Operations is to free enterprise employees to focus on their customers and business growth. In one example, Capgemini is looking to assist an enterprise in increasing its sales within one geography from ~$1bn to $4bn.

The organization needed to free up its operational energies to focus on growth and create an insight-driven consumer-first mindset. However, the organization faced the following issues:

- 70% of its planning effort was spent analyzing past performance, and ~100 touches were required to deliver a monthly forecast

- Order processing efficiency was below the industry average

- Approx. 30% of its trucks were leaving the warehouse half-empty

- Launching products was taking longer than expected.

Capgemini took a multidisciplinary approach end-to-end across plan-to-cash. One key to growth is the provision of timely information. Capgemini is aiming to improve the transparency of business decisions. For example, the company has rationalized the coding of PoS data so that it can be directly interfaced with forecasting, shortening the forecasting process from weeks to days and enhancing the quality of promotional decisions.

Capgemini also implemented One Operations, leveraging D-GEM to develop a best-in-class operating model resulting in a €150m increase in revenue, 15% increase in forecasting accuracy, 50% decrease in time spent on setting up marketing promotions, and a 20% increase in order fulfillment rate.

]]>

Analytics has often been run as a series of periodic and siloed exercises. However, to respond to their customers in the smartest, fastest, most efficient manner, WNS perceives that organizations increasingly need to run their analytics always-on, in almost real-time, and on an enterprise rather than siloed basis. To do this and become ‘insights-led enterprises’, organizations’ analytics need to be supported by a suitable underlying enterprise data ecosystem, typically cloud-based.

WNS has had a strong Data & Analytics practice for many years. In the past, the scope of WNS’ analytics-led engagements was somewhat limited and frequently priced on an FTE basis. WNS now seeks to significantly broaden the scope, powered by data management, Artificial Intelligence (AI), and cloud, and aggressively incorporate alternative and outcome-based pricing models. WNS has now repositioned to work more upstream on client engagements and participate in larger data lake transformations, rebranding its Data & Analytics practice as WNS Triange.

Repositioning and Expanding Horizons as an ‘End-to-End Industry Analytics’ Player

This repositioning aims to establish WNS with a clear identity as ‘an end-to-end industry analytics player’ that delivers outcomes and not just personnel. It also assists the Data & Analytics practice in targeting transformational activity for functional business heads, CDOs, and CTOs outside of WNS BPS engagements, in establishing a stronger identity within WNS, and in attracting talent in a challenging talent market.

WNS is also aiming to change the scope of engagements from running individual use case analytics in silos to assisting organizations with the broader management of their underlying data ecosystems and rolling out analytics on an enterprise basis at scale.

Accordingly, WNS Triange is building data & analytics capability on the cloud, together with data/AI Ops capability to run large-scale data operations and governance at scale. These capabilities are supported by an Analytics CoE that brings together “best practices” on cloud, data, and AI, together with associated governance mechanisms and domain expertise.

Investing in High-End Consulting and Hyperscaler-Certified IP

WNS Triange currently has ~4,500 personnel and is being restructured into three components:

- Triange Consult. WNS Triange is placing much greater emphasis on up-front consulting than previously and is increasingly recruiting and locating senior consultants onshore operating from its design labs. WNS has also built framework assets in support of Triange Consult in the past two years, covering areas such as analytics and AI strategy, data strategy, and data quality & governance strategy, together with domain-specific consulting

- Triange NxT. WNS continues to focus on the creation of accelerators. These include SKENSE, Unified Analytics Platform, Insurance Analytics in a BOX, Emerging Brands and Trends, InsighTRAC, and Datazone.ai

- Triange CoE, for analytics project and service implementation.

WNS has invested in platforms to address intelligent cloud data ops as well as in analytics AI models. These Triange NxT platforms assist WNS in delivering speed-to-execution and speed-to-value since these elements are pre-built models with tested connectors to third-party data and are being cloud-certified with the necessary governance and built-in security protocols.

For example, the Triange NxT Insurance Analytics Platform provides pre-trained AI-based and non-AI-based analytics models in support of insurance analytics related to claims, pricing, underwriting, fraud, customer marketing, and service & retention. These models are underpinned by APIs to leading insurance platforms, connectors with workflow systems, ML Ops, and what-if analyses. WNS also incorporates platforms from partnerships with start-ups and specialized data providers as part of its prepackaged solutions.

Key WNS platforms within Triange NxT include Skense for data extraction and contextualization, Insurance Analytics Platform, InsighTRAC for procurement insights, and SocioSEER, a social media analytics platform. WNS is currently finalizing the certification of each platform on AWS and Azure and making them available in cloud marketplaces.

SKENSE platform-based solutions have been built to address a range of use cases across finance & accounting, customer interaction services, legal services, and procurement, as well as banking & financial services, shipping & logistics, healthcare, and insurance.

Increasing Use of Co-Innovation and Non-FTE Pricing Models

WNS Triange revenues have grown ~25% over the past year, and WNS is increasing its use of co-innovation and non-FTE pricing models.

For example, WNS has deployed its AI/ML platform to capture the quality control data from the various plants of an FMCG company, create summaries, change the data into a suitable format for generating insights, and return the summary notes and insights to the FMCG company’s data lake.

This resulted in an 82% reduction in processing cost per document compared to what had previously been a very manual process.

WNS undertook the development of this IP largely at its own expense and now owns it, with the client paying some elements of the development fee and a licensing fee. In addition, WNS will pay the initial client a percentage of the revenue if this IP is sold to other CPG companies.

WNS helped an insurance client automate the process of identifying subrogation opportunities in the claims processing workflow. WNS used MLOps frameworks to identify recovery opportunities based on historical data and predict which opportunities in the current transactional data have higher chances of recovery. This helped the client improve its recovery rates by multiple percentage points.

Elsewhere, WNS is working with a media client to transform the enterprise into a digital media agency and reinvent its traditional approach to processes such as media planning and customer segmentation. Here, WNS is assisting the company with multiple data & analytics initiatives. In some cases, this involves the Triange Consult practice, in others provision of platforms, and in others, the application of the Triange CoE approach.

For example, WNS Triange Consult is helping the company establish an appropriate cloud architecture and organize its data appropriately, establish how to run machine learning ops, and identify the appropriate design for a complete reporting center.

The company’s data has traditionally been paper-based, so WNS Triange NxT is using platforms to digitize its data and provide insights for real-time decision-making. WNS is also helping the company set up its training infrastructure for data & analytics.

This repositioning is underlined by systemic structural changes that will enable WNS to adopt a more consultative and enterprise-scale approach to analytics. While many organizations will still address analytics on a siloed case-by-case basis, and these use cases remain important, WNS now has the structure to go beyond individual use cases, further augmenting its traditional strengths in domain-based analytics and assisting organizations in adopting more systematic approaches to establishing and scaling their enterprise analytics infrastructures end-to-end with enterprise-level data, analytics, and AI.

]]>

Digital transformation and the associated adoption of Intelligent Process Automation (IPA) remain at an all-time high. This is to be encouraged, and enterprises are now reinventing their services and delivery at a record pace. Consequently, enterprise operations and service delivery are increasingly becoming hybrid, with delivery handled by tightly integrated combinations of personnel and automations.

However, the danger with these types of transformation is the omnipresent risk in intelligent process automation projects of putting the technology first, regarding people as secondary considerations, and alienating the workforce through reactive communication and training programs. As many major IT projects have discovered over the decades, the failure to adopt professional organizational change management procedures can lead to staff demotivation, poor system adoption, and significantly impaired ROI.

The greater the organizational transformation, the greater the need for professional organizational change management. This requires high workforce-centricity and taking a structured approach to employee change management.

In the light of this trend, NelsonHall's John Willmott interviewed Capgemini's Marek Sowa on the company’s approach to organizational change management.

JW: Marek, what do you see as the difference between organizational change management and employee communication?

MS: Employee communication tends to be seen as communicating a top-down "solution" to employees, whereas organizational change management is all about empowering employees and making them part of the solution at an individual level.

JW: What are the best practices for successful organizational change management?

MS: Capgemini has identified three best practices for successful organizational change management, namely integrated OCM, active and visible sponsorship, and developing a tailored case for change:

- Integrated OCM – OCM will be most effective when integrated with project management and involved in the project right from the planning/defining phase. It is critical that OCM is regarded as an integral component of organizational transformation and not as a communications vehicle to be bolted on to the end of the roll-out.

- Active and visible sponsorship – C-level executives should become program sponsors and provide leadership in creating a new but safe environment for employees to become familiar with new tools and learn different practices. Throughout the project, leaders should make it a top priority to prove their commitment to the transformation process, reward risk-taking, and incorporate new behaviors into the organization's day-to-day operations.

- Tailored case for change – The new solution should be made desirable and relevant for employees by presenting the change vision, outlining the organization's goals, and illustrating how the solution will help employees achieve them. It is critical that the case for change is aspirational, using evidence based on real data and a compelling vision, and that employees are made to feel part of the solution rather than threatened by technological change.

JW: So how should organizations make this approach relevant at the workgroup and individual level?

MS: A key step in achieving the goals of organizational change management is identifying and understanding all the units and personnel in the organization that will be impacted both directly and indirectly by the transformation. Each stakeholder or stakeholder group will likely find itself in a different place when it comes to perspective, concerns, and willingness to accept new ways of working. It is critical to involve each group in the transformation and get them involved in shaping and driving the transformation. One useful concept in OCM for achieving this is WIIFM (What's In It For Me), with WIIFM identified at a granular level for each stakeholder group.

Much of the benefit and expected ROI is tied to people accepting and taking ownership of the new approach and changing their existing ways of working. Successfully deployed OCM motivates personnel by empowering employees across the organization to improve and refine the new solution continually, stimulating revenue growth, and securing ROI. People need to be aware both of how the new solution is changing their work and of their active role in driving it; through that role, they are making the organization a "powerhouse" for continuous innovation.

How an enterprise embeds change across its various silos is very important. In fact, in the context of AI, automation is not only about adopting new tools and software but mostly about changing the way the enterprise's personnel think, operate, and do business.

JW: How do you overcome employees' natural fear of new technology?

MS: To generate enthusiasm within the organization while avoiding making the vision seem unattainable or scary, enterprises need to frame and sell transformations that incorporate AI, for example, as evolutions of something the employees are doing already: not merely "the next logical step" but reinventions of the whole process, from both the business and experience perspectives. They need to retain the familiarity that gives people comfort and confidence while reassuring them that the new tool or solution adds to their existing capability, allowing them to fulfill their true potential, something that is not automatable.

]]>

The value of automation using tools such as RPA, and more recently intelligent automation, has been accepted for years. However, there is still a danger in many automation projects that while each project is valuable in its own right, they become disconnected islands of automation with limited connectivity and lifespans. Accordingly, while elements of process friction have been removed, the overall end-to-end process can remain anything but friction-free.

Capgemini has developed the "Frictionless Enterprise" approach in response to this challenge, an approach the company is now applying across all Capgemini’s Business Services accounts.

What is the Frictionless Enterprise?

The Frictionless Enterprise is essentially a framework and set of principles for achieving end-to-end digital transformation of processes. The aim is to minimize friction in processes for all participants, including customers, suppliers, and employees across the entire value chain of a process.

However, most organizations today are far from frictionless. In most organizations, the processes were designed years ago, before AI achieved its current maturity level. Similarly, teams were traditionally designed to break people up into manageable groups organized by silo rather than by focusing on the horizontal operation they are there to deliver. Consequently, automation is often currently being used to address pain points in small process elements rather than transform the end-to-end process.

The Frictionless Enterprise approach requires organizations to be more radical in their process reengineering mindsets by addressing whole process transformation and by designing processes optimized for current and emerging technology.

Capgemini’s Business Services uses this approach to assist enterprises in end-to-end transformation from conception and design through to implementation and operation, with the engine room of Capgemini Business Services now focused on technology, rather than people, for transaction processing.

A change in mindset is critical for this to succeed. Capgemini is increasingly encouraging its clients to move from customer-supplier relationships to partnerships around shared KPIs and adopt dedicated innovation offices.

The five fundamentals of the Frictionless Enterprise

Capgemini views the Frictionless Enterprise as depending on five fundamentals: hyperscale automation, cloud agility, data fluidity, sustainable planet, and secure business.

Hyperscale automation

This ultimately means the ability to reach full touchless automation. Hyperscale automation depends on exploiting artificial intelligence and building a scalable and flexible architecture based on microservices and APIs.

Cloud agility

While the frictionless transformation approach is designed to work at the sub-process level and the overall process level, it is important that any sub-process changes are a compatible part of the overall journey.

Cloud agility emphasizes improving the process in ways that can be reused in conjunction with future process changes as part of an overall transformation. So any changes made to sub-processes addressing immediate pain points should be steps on the journey towards the final target end-to-end operating model rather than temporary throwaway fixes.

Accordingly, Capgemini aims to bring the client the tools, solutions, and skills that are compatible with the final target transformation. For example, tools must be ready to scale, and at present, API-based architectures are regarded as the best way to implement cloud-native integration. This has meant a change in emphasis in the selection and nature of relationships with partners. Capgemini now spends much more time than it used to with vendors, and Capgemini’s Business Services has a global sales officer with a mandate to work with partners. In addition, this effort is now much more focused, with Capgemini concentrating its efforts on a limited set of strategic partners. All the solutions chosen are API native, fully able to scale, AI at the core, and cloud-based. One example of a Capgemini partner is Kryon in RPA, since it can record processes as well as automate them.

Data fluidity

It's important within process transformations to use both internal and external data, such as IoT and edge data, efficiently and have a single version of the truth that is widely accessible. Accordingly, data lakes are a key foundational component in frictionless transformations.

However, while most enterprises have lots of data to leverage, they also have lots of data points that need to be fixed. Master data management is critical to successful transformation and remains an important part of transformed operations.

Digital twins are key to removing process friction and are used as the interface between how the business currently operates and how it needs to operate in the future. As well as providing an accurate view of the reality of current process execution, process mining also speeds up process transformation, enabling transformation consultants to focus on evaluation and prioritization of opportunities for change rather than collecting process data. Process mining can also help with maintaining best practice compliance post-transformation by monitoring how individual agents are using their systems, with the potential to guide them through proactive online training and removing the need to compensate for agent inefficiencies with automation.
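To make the process mining step more concrete, the sketch below counts how often one activity directly follows another in an event log, which is a common starting point for reconstructing how a process actually runs. It is a minimal illustration only: the event log, the activity names, and the `directly_follows` function are hypothetical, and it does not represent the tooling Capgemini or any vendor uses.

```python
from collections import Counter, defaultdict

# Hypothetical event log rows: (case id, activity, timestamp as an integer).
event_log = [
    ("inv-1", "receive invoice", 1),
    ("inv-1", "match to PO", 2),
    ("inv-1", "approve", 3),
    ("inv-1", "pay", 4),
    ("inv-2", "receive invoice", 1),
    ("inv-2", "match to PO", 2),
    ("inv-2", "query supplier", 3),
    ("inv-2", "match to PO", 4),
    ("inv-2", "approve", 5),
    ("inv-2", "pay", 6),
]


def directly_follows(log):
    """Count (activity, next activity) pairs within each case."""
    traces = defaultdict(list)
    # Order events by case and then by timestamp to rebuild each trace.
    for case_id, activity, _ts in sorted(log, key=lambda row: (row[0], row[2])):
        traces[case_id].append(activity)
    counts = Counter()
    for activities in traces.values():
        # Pair every activity with the one that comes straight after it.
        counts.update(zip(activities, activities[1:]))
    return counts


dfg = directly_follows(event_log)
for (source, target), n in dfg.most_common():
    print(f"{source} -> {target}: {n}")
```

In this made-up log, the pair "query supplier" followed by "match to PO" marks a rework loop, the kind of friction a transformation consultant would then evaluate and prioritize.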

Sustainable planet

It's also becoming extremely important when reviewing end-to-end processes to consider their impact on the planet across the whole value chain, including suppliers. For example, this covers both carbon impact and social aspects such as diversity, including ensuring a lack of bias in AI models. Sustainability is becoming increasingly important in financial reporting, and in response, Capgemini has added sustainability into its integrated architecture framework.

Secure business

Enterprises cannot undertake massive transformations unless they are guaranteed to be secure, and so the Frictionless Enterprise approach encompasses account security operations and cybersecurity compliance. Similarly, change management is of overwhelming importance within any transformation project, and the Frictionless Enterprise approach focuses on building trust and transparency with customers and partners to facilitate the transformation of the value chain.

A client example of Frictionless Enterprise adoption

Capgemini is helping a CPG company to apply the Frictionless Enterprise approach to its sales & distribution planning. The company was already upper quartile at each of the individual process elements such as supply planning and distribution planning in isolation, but the overall performance of its end-to-end planning process was inadequate. Accordingly, the company looked to improve its overall inventory and sales KPIs dramatically by reengineering its end-to-end order forecasting process. For example, improved prediction would help achieve more filled trucks, and improved inventory management has a direct impact on sustainability and levels of CO2 production.

The CPG company undertook planning quarterly, centrally forecasting orders. However, half of these central forecasts were subsequently changed by the company's local planners, firstly because the local planners had more detailed account information and did not believe the centrally generated forecasts, and secondly because quarterly forecasts were unable to keep up with day-to-day account developments.

So there was a big disconnect between the plan and the reality. To address this, Capgemini undertook a process redesign and proposed daily planning, entailing:

- Planning overnight daily with machine learning used to forecast orders based on the levels of actual orders up until that point

- Removing local planners' ability to change order forecasts but making them responsible for improving the quality of the master data underpinning the automated forecasts, such as identifying the correct warehouse used to deliver to a particular customer.
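The idea of re-forecasting every night from actual orders can be sketched in a few lines. The example below uses simple exponential smoothing as a stand-in for the machine learning model, which the source does not describe; the function names, the smoothing factor, and the order figures are all assumptions for illustration.

```python
from typing import List


def update_forecast(previous_forecast: float, actual_orders: float, alpha: float) -> float:
    """Blend the previous forecast with the latest day's actual orders.

    Simple exponential smoothing, used here only as a placeholder for
    the (unspecified) machine learning model.
    """
    return alpha * actual_orders + (1 - alpha) * previous_forecast


def nightly_run(initial_forecast: float, daily_actuals: List[float], alpha: float = 0.5) -> List[float]:
    """Re-forecast after each day's actual orders arrive."""
    forecasts = []
    forecast = initial_forecast
    for actual in daily_actuals:
        forecast = update_forecast(forecast, actual, alpha)
        forecasts.append(forecast)
    return forecasts


# Hypothetical figures: a starting forecast of 100 orders, then three days of actuals.
print(nightly_run(100.0, [120.0, 130.0, 90.0], alpha=0.5))
# prints [110.0, 120.0, 105.0]
```

The forecast moves each night with the orders actually received, whereas a quarterly figure would stay fixed while account-level reality drifts away from it.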

This process redesign involved comprehensive automation of the value chain and the use of a data lake built on Azure as the source of data for all predictions.

Capgemini has now been awarded a 5-year contract with a contractual goal of completing the transformation in three years.

]]>

Part 1 of this blog focused on Capgemini’s structured approach to workforce motivation and upskilling when transitioning to a Frictionless Enterprise that leverages a digitally augmented workforce. This second part looks at how, when adopting a digitally augmented workforce, it is critical to ensure optimized routing of incoming queries and transactions between humans and machines, and to ensure that the expected ROI is delivered from automation projects.

Intelligent Routing of Transactions between Workforce and Machines

Intelligent query access and routing is essential to successfully deploy a hybrid human/machine workforce to achieve the optimal allocation of transactions between personnel and machines. For example:

- For a North American manufacturer, Capgemini combined RPA with multiple microservices from AWS and Google and Capgemini code to classify 41 categories of incoming accounts payable queries. If a classification is possible, the query is allocated to either a human or a machine. Queries that go the machine route have their text analyzed using NLP, and actions are then triggered to collect the information necessary to answer the query. If the confidence level in the response exceeds 95%, the answer is sent automatically. If not, the query and response are sent to a human for review and confirmation. This is an example of a digitally augmented workforce.

- For another client, Capgemini reduced the cost per query of procure-to-pay queries from 180 cents to 17 cents by using a digitally augmented workforce. The company’s AI Query Classifier uses NLP and ICR to extract the relevant information from the unstructured text, validate the query, and automate ticket creation. Its AI Workload Distribution then orchestrates the process and decides whether each case goes through automated or human resolution.

- Elsewhere, a client had a large team serving billable transactions in 24 languages, but 30%-40% of the transactions they received were not relevant to this team. Capgemini implemented 90% automated identification & indexing for 21 of these 24 languages. The data is validated, further data retrieved where necessary, and then the data revalidated. Business rules are then applied to identify whether the transaction is handled manually or automated. Savings of ~75% of the total effort were achieved.
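The routing logic in the first example above can be sketched as follows. This is an illustration only, not Capgemini's implementation: the `Query` fields, the queue names, and the handling of unclassifiable queries are all assumptions. Only the 95% confidence threshold comes from the text.

```python
from dataclasses import dataclass
from typing import Optional

# From the text: answers whose confidence exceeds 95% are sent automatically
AUTO_SEND_CONFIDENCE = 0.95


@dataclass
class Query:
    text: str
    category: Optional[str]         # None when no category could be assigned
    machine_eligible: bool = False  # hypothetical flag: category suits the machine route
    confidence: float = 0.0         # hypothetical confidence in the drafted answer


def route(query: Query) -> str:
    """Return the (hypothetical) queue a query should be sent to."""
    if query.category is None:
        # Assumption: the text does not say what happens to unclassified queries
        return "human_triage"
    if not query.machine_eligible:
        return "human_agent"
    if query.confidence > AUTO_SEND_CONFIDENCE:
        return "auto_send"
    # Machine-drafted answer below the threshold goes to a person for review
    return "human_review"


# Hypothetical examples
print(route(Query("Where is my payment?", "payment_status", True, 0.97)))
print(route(Query("Where is my payment?", "payment_status", True, 0.80)))
```

Keeping the threshold as a single named constant makes it easy to tune the split between automated and human-reviewed responses as confidence in the model grows.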

The use of machine translation is becoming increasingly important in these situations, and Capgemini is now working on machine language translation to reduce its dependency on nearshore centers employing large numbers of native speakers in multiple languages.

Preformed automation assets are also important in combining best practices and intelligent automation. Here, Capgemini has introduced 890 by Capgemini. This catalog of analytics services enables organizations to access analytical and AI solutions and datasets from within their own organization, from multiple curated third-party providers, and from Capgemini. Capgemini has focused on the provision of sector-specific solutions and currently offers ~110 sector solutions.

Introduction of Digital Twins Ensures Delivery of RoI from Technology Deployment

Capgemini’s approach to data-driven process discovery and excellence is based on combining process mining using process logs, task capture and task mining using desktop recorders, productivity analytics for each individual, and use of digital twins.

Tools used include Fortress IQ, Celonis, and Capgemini’s proprietary Prompt tool. These tools are combined with Capgemini’s Digital Global Enterprise Model (D-GEM) platform to incorporate best-in-class processes and frictionless processing.

Digital twins are used to progress process discovery beyond digital snapshots and provide ongoing process watching, assessment, and definition of opportunities. They also allow Capgemini to simulate the real returns that the introduction of technology will achieve, by highlighting any other process constraints that will be exposed and will limit the expected RoI from automation initiatives.

Capgemini’s approach to process digital twin introduction is:

- To start with business mining, a combination of process mining, task mining, and Capgemini’s D-GEM platform

- This is followed by benchmarking the processes against D-GEM

- Then simulating the impact of introducing technology, calculating the business case, and ensuring that the result achieved is close to what was anticipated by identifying any potential process bottlenecks that might reduce the technology deployment’s savings. These simulations also help in accelerating the approval of intelligent automation projects and the scaling of digital transformation within the enterprise, since they increase management confidence in the certainty of project outcomes.

- This is followed by continuous improvement and identifying ongoing areas for improvement.
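The simulation step above can be illustrated with a toy model showing why automating one step may deliver less than expected. It assumes a process of steps in series whose throughput is capped by the slowest step. The step names, capacities, and function names are hypothetical and are not taken from any Capgemini tooling.

```python
from typing import Dict, Tuple


def throughput(capacities: Dict[str, float]) -> float:
    """Throughput of steps in series is capped by the slowest step."""
    return min(capacities.values())


def simulate_automation(
    capacities: Dict[str, float], step: str, speedup: float
) -> Tuple[float, str]:
    """Return (overall throughput gain, new bottleneck) after speeding up one step."""
    after = dict(capacities)
    after[step] = capacities[step] * speedup
    gain = throughput(after) / throughput(capacities)
    bottleneck = min(after, key=after.get)
    return gain, bottleneck


# Hypothetical capacities in transactions per hour
process = {"capture": 100, "validate": 120, "approve": 150}

# Tripling capture capacity yields only a 1.2x overall gain, because
# validation (120 per hour) becomes the constraint
gain, bottleneck = simulate_automation(process, "capture", speedup=3.0)
```

In this toy case a business case built on a threefold improvement would be badly overstated, which is the kind of exposed constraint the simulation is meant to surface before investment is approved.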

Also, during the pandemic, it is increasingly difficult to run onsite workshops for automation opportunity identification. It is becoming increasingly necessary to use digital twin process mining of individuals’ machines to remotely build business cases. This development may become standard practice post-pandemic if it proves to be a faster and more reliable basis for opportunity identification than interviewing SMEs.

Conclusion

In conclusion, the deployment of technology is arguably the easy part of intelligent process automation projects. Two more challenging elements have always been interpreting and routing unstructured transactions and queries, and identifying and delivering RoI. Capgemini’s Frictionless Enterprise approach – that leverages a digitally augmented workforce – addresses both these challenges by combining technologies for classifying and routing unstructured transactions and queries, and by introducing process digital twins to ensure RoI delivery.

You can read Part 1 of this blog here.

]]>

This is Part 1 of a two-part blog looking at Capgemini’s Intelligent Process Automation practice. Here I examine Frictionless Enterprise, Capgemini’s framework for intelligent process automation that focuses on the adoption of a digitally augmented workforce.

Digital transformation has been high on enterprise agendas for some years. However, COVID-19 has given the drive to digital transformation even greater impetus as organizations have increasingly looked to reduce cost, implement frictionless processing, and decouple their increasingly unpredictable business volumes from the number of servicing personnel required.

For Capgemini, this has resulted in unprecedented increases in Intelligent Process Automation bookings and revenue in 2020.

Frictionless Enterprise & the hybrid workforce

There is always a danger in intelligent automation projects of regarding people as secondary considerations and addressing the workforce through reactive change management. Capgemini's Frictionless Enterprise approach stresses the adoption of a digitally augmented workforce. It aims to avoid this pitfall by maintaining high workforce-centricity and involving employees in the automation journey through a structured approach to workforce communication and upskilling.

Capgemini's Intelligent Automation Practice emphasizes the workforce communication and reskilling needed to achieve a digitally augmented or hybrid workforce. This involves putting humans at the center of the hybrid workforce and motivating and reskilling them.

The personnel-related stages that Capgemini uses in the journey towards a Frictionless Enterprise leveraging a digitally augmented workforce are:

- Design of the augmented workforce. On the design side, it is important to ask, "what is the impact of technology on the workforce and how should the organization's competency model change?" How is the workforce of the future defined?

- Building the augmented workforce

- Creating the right context.

Client cases

In one client example, Capgemini assisted a major capital markets firm in designing and building its digitally augmented workforce, using a four-step process:

- Resource profiling

- Dedicated curriculum creation

- Pilot on 15% of resources

- Augmented workforce scaling.

Step 1: This involved identifying personnel with a statistics or mathematics background who could be potential candidates for, say, ML data analysis. These potential candidates were then interviewed and tested to ensure their ability, for example, to run a Monte Carlo simulation.

Having established the desired job profiles, these personnel were allocated to various job families, such as automation business analysts, data analysts, power users, and developers, with developers split into low code/no-code developers and advanced developers.

Step 2: A dedicated curriculum was created in support of each of the job families. However, to ensure the training was focused and to increase employee engagement and retention, each employee was tasked up-front with clearly defined projects to be undertaken following training. This kept the training relevant and avoided a demotivating disconnect between training and deployment.

Step 3: 15% of the entire team was then trained and deployed in their new roles. This figure ranges between 5% and 15% depending on the client, but it is important to deploy on a sub-set of the workforce before rolling out more widely across the organization. This has the dual advantages of testing the deployment and creating an aspirational group that other employees wish to join.

Step 4: Roll-out to the wider labor force. The speed of roll-out typically depends on the sector and company culture.

Capgemini has also helped a wealth management company enhance its ability to supply information from various sources to its traders by enhancing its capabilities in data management and automation. In particular, this required upskilling its workforce to address shortages of data, automation, and AI skillsets.

This involved a 3-year MDM Ops modernization program with dedicated workforce augmentation and upskilling for digitally displaced personnel, starting with three personnel groups.

This resulted in an average processing speed increase of 64% and an estimated data quality increase of 50%, and the approach was subsequently adopted more widely within the company's in-house operations.

AI Academy Practitioner's Program

Capgemini has created its AI Academy Practitioner's Program, an "industrialized approach" to AI training to support workforce upskilling. This program is mentor-led and customizable by sector and function to ensure that it supports the organization's current challenges.

The program's technical elements include:

- "Qualifying" (6 hours over 3 days) for personnel who only need to be aware of the potential of AI

- "Professional" (10 hours per week for 4 weeks), where personnel are provided with low-code tools to start developing something

- "Expert" (10 hours per week for 4 weeks), incorporating custom AI & ML model building.

The program's functional courses include:

- Data literacy (4 hours over 4 days)

- Business functional (10 hours per week for 4 weeks)

- Business influencer (CXO) (15 hours over 3 days)

- Intelligent process automation (15 hours over 3 days), highlighting how to combine the automation stack with AI.

Conclusion

In conclusion, the deployment of technology is arguably the easy part of intelligent process automation projects. A more challenging element has always been to motivate the workforce to come forward with ideas and enthusiastically adopt change. Capgemini's Frictionless Enterprise approach – that leverages a digitally augmented workforce – addresses this challenge by adopting an aspirational approach to upskilling the workforce and removing the disconnect between training and deployment.

]]>

The following is a discussion between Nagendra (Nag) P Bandaru, President, Wipro Limited – Global Business Lines-iCore, and John Willmott, NelsonHall CEO, covering lessons learned in 2020 and success factors for 2021. Nag is responsible for Infrastructure and Cloud Services, Digital Operations and Platforms, and Risk Services and Enterprise Cybersecurity – three key strategic business lines of Wipro Limited that help global clients accelerate their digital journeys. Together, these business lines generate revenues of USD 4.3 billion with an overall operation of more than 100,000 employees across 50 global delivery locations. He is a member of Wipro’s Executive Board, the apex leadership forum of the company. Nag is based in Plano, Texas.

JW: Nagendra, what was the most important lesson that you learned in 2020 in the face of COVID-19 and its impact on business?

Nag: What 2020 taught us was that no plans, great systems, or great processes would work in an unprecedented environment. It was a year of being resilient – of creating hope when you have no hope. For us, that was the starting point.

In a world where everything is constrained, how do you keep your operations live? Such situations often lend themselves to the danger of overemphasizing technology, frameworks, systems, and processes while underestimating people. But I believe that it is most important to have a resilient team. During BCP implementation in response to government-imposed lockdowns across the globe, the team’s leadership and decisive action have been the cornerstone of our success. Some of our employees were stuck at home without access to their secure office desktops. Our teams worked closely with local administrations to obtain the appropriate government permissions and ship laptops to employees’ homes – often crossing inter-state borders – through ground transport. Then, we had to set up the software and security remotely and change all the dongles to direct-to-home broadband. At that time, it was about keeping things simple and working out the basics. We saw simplicity being redefined by the pandemic. In getting the basics right, the bigger stuff began to fall into place. The experience taught us to focus on the basics.

Our global teams ensured that we moved from BCP to business as usual within a few weeks of the lockdown, with 93% of our colleagues working securely from their homes. This proved that business resilience is about having the right talent. My leaders also emerged strong and worthy in those extremely difficult times. It is important to build future leaders whose core capability is the ability to anticipate and prevent risk. This ability to manage risk is the biggest leadership trait a company needs to be successful.

JW: The IT and BPS industry was remarkably resilient in 2020 and has a very promising outlook for 2021. What do you see as the main growth opportunities?